Most postmortems produce a document nobody reads and action items nobody ships. That is not an exaggeration. I have watched teams write an hour of prose about an outage, file the document in a Confluence space labeled “Incident Reviews 2024 (deprecated),” and then, eighteen months later, have the same outage again. The postmortem was written. The incident rate did not change.

The gap between writing a postmortem and actually reducing incidents is usually not about blame, discipline, or honesty. It is about format. Most postmortem templates are inherited from Google’s SRE book and then diluted through a dozen companies, each of which added its own fields (“Impact Assessment,” “Communication Timeline,” “Customer Comms Log”) without asking whether the added fields made future incidents less likely. The resulting document is long, ritualistic, and unconnected to any mechanism that would prevent a repeat.

The format I use is deliberately short. Four questions the document must answer, four decisions the team must make, and one feedback loop into engineering planning. That is it. Everything else is optional and mostly gets skipped.

What a postmortem is actually for

Before we get to the format, the premise. A postmortem exists to make the next incident less likely. Not to document. Not to attribute. Not to comply. If the document does not lead to a change in how the system, the process, or the organization behaves, it was wasted hours.

That single criterion cuts most of what lives in typical postmortem templates. A two-page timeline of alerts and Slack messages, reconstructed after the fact, is usually not what reduces the next incident. What reduces the next incident is:

- Understanding the class of problem well enough to recognize the next one early.

- Shipping a concrete change (code, process, or ownership) that removes one of the contributing factors.

- Telling the rest of engineering about the failure mode in a form they can remember.

- Integrating the lesson into the team’s existing planning rhythms so it does not get forgotten.

Those four things are what the questions, the decisions, and the feedback loop in my postmortem format are built to produce.

The four questions

Every postmortem answers these, in this order, in no more than a page or two total.

1. What did the user experience, and for how long?

Not what the system did. What the user saw. This is the lead because the postmortem’s audience (you in six months, the engineer who joins next year, the stakeholder who needs to fund the fix) needs the customer impact framed first to care about the rest of the document.

Good answers to this question are short, concrete, and quantified:

Between 14:17 and 14:52 UTC on 2026-03-15, users attempting to complete checkout saw a generic error message and could not submit orders. The error screen persisted through retries. Approximately 340 checkout attempts failed over this window, representing roughly €28,000 in delayed or abandoned revenue. Mobile traffic was disproportionately affected (roughly 72% of failures were mobile).

Bad answers hedge, technicalize, or understate. “Some users may have experienced intermittent issues with the checkout service” is bad. “Customers could not check out for 35 minutes, and we estimate we lost €28,000 in sales” is good.

This question serves a second purpose. It forces the postmortem owner to look up the numbers. If there are no numbers (no error rate, no affected user count, no revenue estimate), you will learn that fast, and the team will remember to capture them during the next incident instead of reconstructing them afterwards.
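The headline numbers in the example answer are simple arithmetic over data you should already have. A minimal sketch, assuming you can pull the failure counts from your own metrics; the function name, the count of 245 mobile failures, and the average order value of €82 are illustrative assumptions chosen to land near the figures in the example, not values from a real system:

```python
from datetime import datetime, timezone


def impact_summary(start, end, failed_attempts, mobile_failures, avg_order_value_eur):
    """Return the headline numbers for question 1 as a plain dict."""
    minutes = int((end - start).total_seconds() // 60)
    return {
        "duration_minutes": minutes,
        "failed_attempts": failed_attempts,
        # Share of failures that came from mobile, rounded to two decimals.
        "mobile_share": round(mobile_failures / failed_attempts, 2),
        # Rough estimate only: failed attempts times an assumed average order value.
        "revenue_at_risk_eur": round(failed_attempts * avg_order_value_eur),
    }


summary = impact_summary(
    start=datetime(2026, 3, 15, 14, 17, tzinfo=timezone.utc),
    end=datetime(2026, 3, 15, 14, 52, tzinfo=timezone.utc),
    failed_attempts=340,
    mobile_failures=245,        # hypothetical count
    avg_order_value_eur=82.0,   # hypothetical average order value
)
print(summary)
```

If you cannot fill in one of the arguments, that is the missing instrumentation this question is meant to surface.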

2. What was the sequence of events, and where did our model of the system break?

This is the timeline, but framed around the team’s mental model, not the alert stream. The question to answer at each step is: what did the on-call engineer believe, and when did that belief turn out to be wrong?

14:17 · First failed checkout attempt (user complaint).

14:19 · Alert fired: checkout error rate above 1%.

14:22 · On-call acknowledged. Assumed database connectivity issue based on recent maintenance. Checked database; found healthy.

14:28 · Checked application logs; saw Stripe webhook timeouts. Assumed Stripe outage. Checked Stripe status page; found all green.

14:34 · Discovered that the webhook signature validation was rejecting requests due to a clock drift on the webhook handler pod. (Mental model broke here: we did not know NTP was failing on that pod, and our dashboards did not expose clock drift.)

14:38 · Rolled the pod. Clock resynced. Checkouts resumed.

14:52 · Confirmed error rate normal.

Formatted this way, the timeline is a story, not a log. The story points at a specific thing: the moment the team’s mental model failed. In this case, “we did not know NTP was failing on that pod” is a specific, fixable gap. It becomes the leading candidate for an action item. “We should improve our Stripe integration” is neither specific nor fixable; it is a vague regret.

The habit this question trains is to capture mental-model failures as first-class data. The first time I introduced this framing in an engineering team, two engineers pushed back because “we already have timelines.” Three months in, they had discovered three mental-model failures that predicted later incidents, and nobody questioned the format again.
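One way to treat mental-model failures as first-class data is to record the belief next to each timeline entry and mark whether it held. The sketch below is only an illustration of that idea: the `TimelineEntry` type and `model_breaks` helper are hypothetical names, and the belief wording is my paraphrase of the example timeline (the last two beliefs are inferred, not quoted).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TimelineEntry:
    time: str                           # "HH:MM", UTC
    event: str                          # what happened or what was checked
    belief: Optional[str] = None        # what on-call assumed at this point
    belief_held: Optional[bool] = None  # None when no belief was recorded


def model_breaks(timeline):
    """Return the entries where a recorded belief turned out to be wrong."""
    return [e for e in timeline if e.belief is not None and e.belief_held is False]


timeline = [
    TimelineEntry("14:19", "Alert fired: checkout error rate above 1%"),
    TimelineEntry("14:22", "Checked database; found healthy",
                  belief="Database connectivity issue", belief_held=False),
    TimelineEntry("14:28", "Checked Stripe status page; all green",
                  belief="Stripe outage", belief_held=False),
    TimelineEntry("14:34", "Found clock drift on the webhook handler pod",
                  belief="Pod clocks are in sync", belief_held=False),
    TimelineEntry("14:38", "Rolled the pod; checkouts resumed",
                  belief="A restart will resync the clock", belief_held=True),
]

for entry in model_breaks(timeline):
    print(f"{entry.time}: believed '{entry.belief}', found otherwise")
```

Each entry returned by `model_breaks` is a candidate contributing factor for question 3.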

3. What were the contributing factors, and why did each exist?

Notice: contributing factors, not root cause. Root cause is almost always a fiction (Richard Cook, “How Complex Systems Fail”: “Because overt failure requires multiple faults, there is no isolated ‘cause’ of an accident”). Real incidents typically have four to seven contributing factors, each of which had to be present for the failure to happen. Fixating on a single “root cause” makes the postmortem feel resolved while actually missing most of the available leverage.

The format I use lists each contributing factor as a bullet, followed by a short “why it existed” sentence.

Contributing factors

- The webhook handler pod had NTP disabled. Why: the base image was forked from a utility container that did not need NTP, and nobody noticed the omission during deployment.

- Clock drift was not monitored. Why: our metrics system collected it, but no alert was configured. Clock drift had never caused an incident before, so nobody thought to alert on it.

- The webhook signature validation rejected silently. Why: the Stripe library’s default behavior is to reject invalid signatures with a generic HTTP response; we had not wrapped it with a logger that would have made the specific failure visible in our logs.

- Checkout error messages did not include a request ID. Why: the error renderer was written for consumer-facing readability and stripped internal identifiers. Customer support had no way to correlate user reports with logs.

Four factors, four “why”s. Any one of them, removed, would have either prevented the incident or made it much shorter. That is useful. That gives the team four candidate places to invest effort, ranked by cost and impact.

The “why it existed” sentences are the part that turns contributing factors into fundable work. “Clock drift was not monitored” is a gap; “because we collect the metric but haven’t configured an alert” is a work item of maybe two hours.
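To make the third factor concrete, here is a sketch of how a timestamped signature check can fail because of clock drift, and how a thin wrapper can log the specific reason instead of returning a generic rejection. This is a generic HMAC scheme written with the standard library, not the Stripe library's API; the 300-second tolerance, the exception type, and the log field names are all assumptions made for illustration.

```python
import hashlib
import hmac
import json
import logging

logger = logging.getLogger("webhooks")

TOLERANCE_SECONDS = 300  # assumed tolerance window for this sketch


class SignatureRejected(Exception):
    """Carries a specific, loggable reason for the rejection."""

    def __init__(self, reason, detail):
        super().__init__(reason)
        self.reason = reason
        self.detail = detail


def verify(payload: bytes, timestamp: int, signature: str, secret: bytes, now: float) -> None:
    """Raise SignatureRejected with a specific reason; return None on success."""
    skew = now - timestamp
    if abs(skew) > TOLERANCE_SECONDS:
        # A drifting local clock lands here even when the signature is valid.
        raise SignatureRejected("timestamp_outside_tolerance", {"skew_seconds": round(skew)})
    expected = hmac.new(secret, f"{timestamp}.".encode() + payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, signature):
        raise SignatureRejected("signature_mismatch", {})


def handle_webhook(payload, timestamp, signature, secret, now, request_id):
    """Return an HTTP status code and log rejections as structured JSON."""
    try:
        verify(payload, timestamp, signature, secret, now)
    except SignatureRejected as exc:
        logger.warning(json.dumps({
            "event": "webhook_signature_rejected",
            "reason": exc.reason,
            "request_id": request_id,
            **exc.detail,
        }))
        return 400
    return 200
```

With a log line like this, the on-call engineer in the example would have seen a skew value in the logs at 14:28 instead of inferring a provider outage from timeouts.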

4. What are we going to change, and who owns each change?

This is the only part that reduces future incidents. Everything else is context. The section has to be specific, owned, and dated.

Changes

- Add NTP to the base image used by webhook handler pods. Owner: Platform. Target: 2026-03-18. Status: not started.

- Add clock drift alert at +/- 30 seconds on all pods. Owner: Platform. Target: 2026-03-20. Status: not started.

- Wrap Stripe webhook signature failures with a structured logger entry that includes the raw error. Owner: Payments. Target: 2026-03-22. Status: not started.

- Include request ID in checkout error pages (visible to users, correlatable by support). Owner: Checkout. Target: 2026-04-05. Status: not started.

Four items, four owners, four dates. This is the section the engineering manager reviews weekly until everything is shipped. No action item graduates to “done” until there is a merged PR and a short note in the postmortem linking to it. No action item lingers in “in progress” past its target date without an explicit explanation added.

This is also the section that kills most postmortems in the field. Teams write twelve action items because they want to be thorough. Two months later none are done, because twelve was unrealistic. The rule I enforce: four action items maximum. If you have more than four candidate fixes, rank them, ship the top four, and put the rest in a follow-up ticket for the team to decide on during normal planning.
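The rules in this section (at most four items, no “done” without a linked PR, no overdue item without an explanation) are mechanical enough to check automatically. A minimal sketch, assuming action items live somewhere you can read them as records; the `ActionItem` fields and both helper functions are hypothetical names, not part of any particular tracker:

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

MAX_ACTION_ITEMS = 4


@dataclass
class ActionItem:
    title: str
    owner: str
    target: date
    status: str = "not started"      # "not started" | "in progress" | "done"
    pr_url: Optional[str] = None     # required before an item counts as done
    delay_note: Optional[str] = None # explanation when the target date slips


def validate(items):
    """Return a list of rule violations; an empty list means the section passes."""
    problems = []
    if len(items) > MAX_ACTION_ITEMS:
        problems.append(f"{len(items)} action items; the limit is {MAX_ACTION_ITEMS}")
    for item in items:
        if item.status == "done" and not item.pr_url:
            problems.append(f"'{item.title}' is marked done without a linked PR")
    return problems


def needs_explanation(items, today):
    """Items past their target date that are not done and carry no explanation."""
    return [i for i in items
            if i.status != "done" and i.target < today and not i.delay_note]


items = [
    ActionItem("Add NTP to webhook handler base image", "Platform", date(2026, 3, 18)),
    ActionItem("Add clock drift alert at +/- 30 seconds", "Platform", date(2026, 3, 20)),
    ActionItem("Log Stripe webhook signature failures", "Payments", date(2026, 3, 22)),
    ActionItem("Include request ID in checkout error pages", "Checkout", date(2026, 4, 5)),
]
```

Calling `needs_explanation(items, today)` at the start of the weekly review gives the manager the short list of items to ask about, rather than walking through every row.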

The four decisions

The four questions produce a document. The document is necessary but not sufficient. The postmortem meeting (which happens within five working days of the incident) has to close with four explicit decisions.

Was this a class of incident we have seen before? If yes, the postmortem is also a signal that the previous fix did not generalize. That fact gets captured in the document and flagged for the technical leadership review, because “same thing happened twice” is a different category of problem than “one-off failure.”

Does the debt map need updating? If the postmortem surfaced a region or a trail that was not on the team’s technical debt map (or was marked as lower severity than it turns out to be), the map gets updated in the meeting, before the meeting ends. This is the single most important feedback loop in the process, and it is also the one most often skipped. (The debt map format is described in an earlier essay.)

Do we need to share this more broadly? Some incidents teach lessons that other teams should hear. A postmortem writeup destined for a wider engineering audience is different from one destined for the immediate team; it needs more context, less insider shorthand. The meeting decides whether to write the wider version and who owns it.

Is anything about this postmortem itself a lesson? If the incident exposed a gap in how the team handles incidents (paging was slow, the runbook was wrong, the dashboard did not show what we needed), that is a meta-action item. It goes in a separate list, tracked separately, reviewed quarterly.

The feedback loop

The single feature that separates postmortems that reduce incidents from postmortems that do not is this: the action items have to feed back into the team’s normal planning, not live in a separate ticket system that nobody looks at.

The mechanism I use is simple. Every postmortem action item becomes a ticket in the main engineering backlog, tagged with a label (say, from-postmortem). Those tickets enter the normal sprint planning process at a priority that matches the severity of the incident (red incidents promote their action items to the next sprint; orange get placed by the end of the quarter; yellow get handled opportunistically during adjacent work).

This sounds obvious. It is also, in practice, what most teams fail to do. I have seen action item trackers that were separate tabs, separate tools, separate rituals, all of which meant they were separate from the work that actually got done. Put the items where the team already looks, or they will not get looked at.
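The severity-to-planning rule is small enough to write down as data. The sketch below only builds plain ticket dictionaries; how they reach your backlog depends on your tracker, and the field names, the `incident-<severity>` label, and the `postmortem_ref` argument are assumptions for illustration:

```python
# Planning rule from the text: incident severity decides when action items are scheduled.
PLANNING_RULE = {
    "red": "next sprint",
    "orange": "by end of quarter",
    "yellow": "opportunistic",
}

LABEL = "from-postmortem"


def to_backlog_tickets(severity, action_items, postmortem_ref):
    """Turn (title, owner) pairs into ticket dicts for the main engineering backlog."""
    if severity not in PLANNING_RULE:
        raise ValueError(f"unknown severity: {severity!r}")
    return [
        {
            "title": title,
            "owner": owner,
            "labels": [LABEL, f"incident-{severity}"],
            "schedule": PLANNING_RULE[severity],
            "postmortem": postmortem_ref,  # link back to the document
        }
        for title, owner in action_items
    ]


tickets = to_backlog_tickets(
    "red",
    [("Add NTP to webhook handler base image", "Platform"),
     ("Add clock drift alert at +/- 30 seconds", "Platform")],
    postmortem_ref="checkout-outage-2026-03-15",  # hypothetical identifier
)
```

The point of the label is that a single backlog filter shows every postmortem item alongside the rest of the team's work.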

A second loop: the postmortem informs the technical debt map. Contributing factors that reveal new hazards, or hazards at higher severity than previously thought, get placed on the map in the review meeting. The map is the place where organizational memory of risk lives; if it is not kept current, the next quarter’s planning will underweight the areas that just caused an incident.

What to cut from the usual template

If you have been running postmortems with a longer template, here is what I would cut, and why.

The Executive Summary at the top. It duplicates question 1. Engineers write the summary, then the question, then feel like they are repeating themselves, then the summary becomes vague because they are bored of writing it twice. Pick one. I pick question 1.

The “Detection Timeline” as a separate section. It belongs inside question 2 as part of the story. Pulling it out into its own section creates redundancy and fragments the narrative.

The “Customer Communications Log.” Unless the postmortem is for a regulated industry or a contractual SLA, the customer-facing comms are not what makes the next incident less likely. Keep a reference link to where the comms lived (support ticket thread, status page update) and move on.

The “Severity Classification.” Classifying an incident as SEV-1 vs SEV-2 is useful for the alert routing policy and maybe for quarterly metrics. It is not useful for the postmortem itself; by the time you are writing, the severity is known and the classification is a retrospective label. Keep it in the ticket, not the doc.

The “Lessons Learned” section separate from “Changes we are making.” If the lesson is real, it appears as an action item. If it does not, it is a platitude. “We learned that we should have better alerts” is a platitude unless an action item ships a specific alert.

The worst postmortem I have read

A concrete illustration of what the format prevents. Real example, details changed.

The postmortem was 11 pages. It had a four-paragraph Executive Summary, a six-paragraph Detailed Description, a minute-by-minute timeline covering three hours of Slack messages, and a “Root Cause Analysis” section that concluded with “the root cause was a configuration error.” The Action Items section had 23 bullets, most of them “investigate X” or “consider Y.”

A year later, the same class of incident had recurred twice. I reread the postmortem with the team and asked three questions:

- Which of the 23 action items actually shipped? (Answer: four.)

- Did any of those four address the specific failure mode that recurred? (Answer: no.)

- Where is the configuration error documented as a known failure mode for anyone who would touch that config again? (Answer: nowhere. The knowledge was in the postmortem, which nobody read.)

The document had been thorough in the wrong way. It had created the appearance of analysis without producing any of the outcomes analysis is supposed to produce. We rewrote the postmortem in the shorter format, ended up with three action items (all of which shipped within a month), and added a pinned note to the configuration file itself explaining the class of error. The incident has not recurred since.

Depth is not the virtue. Actionability is. A short, actionable postmortem beats a long, thorough one, every time.

When to skip the postmortem

Not every incident earns one. The rule I use:

- Any customer-visible incident with meaningful impact: always.

- Any incident that was a near miss (a deploy that almost broke prod but was caught during canary): yes, short version, focused on question 2 (where did the mental model break).

- Alerts that fired but had no customer impact and no systemic lesson: no.

- Known intermittent flakes that the team has already decided to accept: no, but periodically review whether the “accept” decision is still correct.

The trap to avoid is postmortem inflation, where every alert generates a document. When postmortems are cheap to skip, the ones that get written get the attention they deserve. When they become mandatory for everything, the format degrades into compliance theater, and the meaningful postmortems drown in the noise.
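The skip rule above can be written as a small decision function, which is a useful test of whether the rule is unambiguous. This is a sketch under my own reading of the list; the flag names are hypothetical, and the handling of an alert with no customer impact but a real systemic lesson (treated here as a short postmortem) is an assumption the list does not spell out.

```python
from enum import Enum


class Postmortem(Enum):
    FULL = "full"
    SHORT = "short, focused on question 2"
    NONE = "none"


def postmortem_for(*, customer_visible=False, meaningful_impact=False,
                   near_miss=False, systemic_lesson=False, accepted_flake=False):
    """Decide which kind of postmortem, if any, an incident earns."""
    if customer_visible and meaningful_impact:
        return Postmortem.FULL
    if near_miss:
        return Postmortem.SHORT
    if accepted_flake:
        # No document, but the "accept" decision should be revisited periodically.
        return Postmortem.NONE
    if systemic_lesson:
        # Assumption: a lesson without customer impact still earns the short version.
        return Postmortem.SHORT
    return Postmortem.NONE
```

For example, a deploy caught during canary would be `postmortem_for(near_miss=True)`, and an alert that fired with no impact and no lesson would be `postmortem_for()`.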

The cadence that keeps it working

Two cadences keep the format producing results.

Weekly action-item review. Ten minutes in the team’s weekly sync, held by the engineering manager. Every open action item gets a status update. Items overdue without a reason get escalated. Completed items get closed with a link to the PR. The cadence is boring, which is why it works.

Quarterly format review. Once a quarter, the team looks at the last few postmortems and asks: is the format still producing outcomes? If action items are not shipping, if the same class of incident is recurring, if the document is getting longer again, something has drifted. Adjust. The format is not sacred; the outcomes are.

These two rituals, combined, are what turn “we do postmortems” into “postmortems actually reduce our incident rate.” Without them, you have a template and a Confluence space. With them, you have a feedback loop.
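The three drift signals in the quarterly review (action items not shipping, incident classes recurring, documents getting longer) can be read off a handful of fields per postmortem. A rough sketch, assuming you record those fields somewhere; the record shape and the sample data are hypothetical, and the function deliberately reports numbers without applying thresholds, since I have not given any:

```python
from collections import Counter


def format_health(postmortems):
    """Summarize the quarterly drift signals from a list of postmortem records."""
    total = sum(p["items_total"] for p in postmortems)
    shipped = sum(p["items_shipped"] for p in postmortems)
    classes = Counter(p["incident_class"] for p in postmortems)
    return {
        # Fraction of action items that shipped; None when there were no items.
        "ship_rate": round(shipped / total, 2) if total else None,
        # Incident classes that appear more than once in the period.
        "recurring_classes": sorted(c for c, n in classes.items() if n > 1),
        # Page counts in chronological order, to eyeball whether documents are growing.
        "pages": [p["pages"] for p in postmortems],
    }


# Hypothetical sample records for one review period.
sample = [
    {"incident_class": "config-error", "items_total": 23, "items_shipped": 4, "pages": 11},
    {"incident_class": "config-error", "items_total": 3, "items_shipped": 3, "pages": 2},
    {"incident_class": "clock-drift", "items_total": 4, "items_shipped": 4, "pages": 2},
]
health = format_health(sample)
```

The output is a conversation starter for the review, not a score; the judgment about whether the format has drifted stays with the team.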

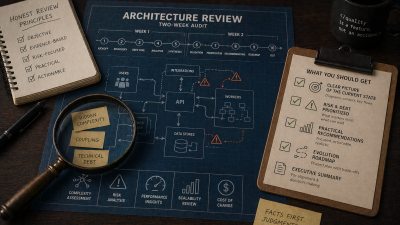

If your team writes postmortems that produce documents but not fewer incidents, my business alignment engagement includes an audit of the current format, the feedback loop into planning, and the technical debt map. Two weeks to reset the ritual, a template calibrated to your team, and the rhythm set up so action items actually ship.

References

- Postmortem Culture: Learning from Failure (Google SRE Book, ch. 15): the canonical writeup of blameless postmortems, action items, and organizational learning from incidents.

- How Complex Systems Fail by Richard I. Cook: the short essay that argues overt failures always have multiple faults, underwriting the “contributing factors, not root cause” framing.

- Technical Debt Is a Map, Not a Backlog: the companion essay describing the debt-map format that the postmortem feedback loop updates.