Most architecture reviews are theatre. A senior consultant flies in, asks polite questions for two days, produces a 60-page slide deck full of generic recommendations, presents it to the leadership team, and leaves. The team nods along. Two months later the deck is forgotten and nothing has changed.

That is not because the consultant was bad or the team was uninterested. It is because the format is wrong. Architecture reviews fail in predictable ways, and they fail because they are designed to make the buyer feel good, not to make the system better.

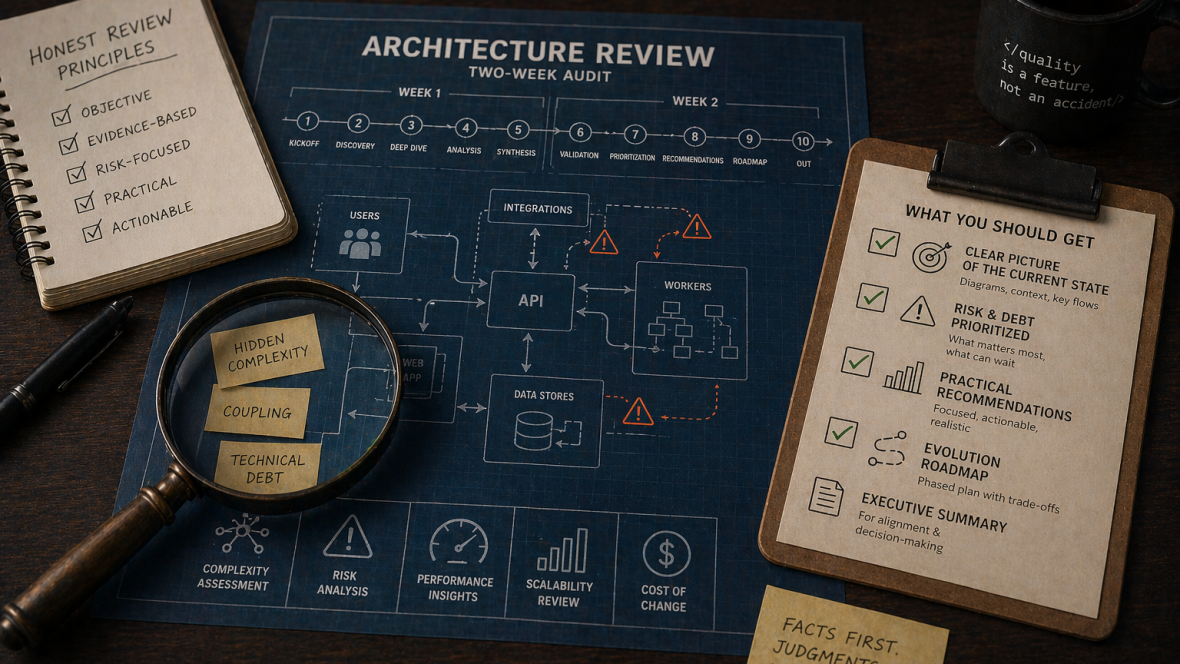

This essay is about what a useful architecture review actually looks like: the ten-day shape I have settled on after running this engagement many times, the artifacts that survive the meeting, and the conversations that make the difference between a deck that gets filed and a roadmap that gets executed.

If you are commissioning a review, this is what to demand. If you are running one, this is the bar to hold yourself to.

The premise: facts first, judgments last, impact always

Three principles shape everything else.

Facts first. The first half of a useful review is information gathering. Reading code. Reading incidents. Reading commit history. Watching deployments. Talking to engineers about what hurts. Not making recommendations. Not framing things as “best practices.” Just collecting the actual state of the system in enough depth that the recommendations, when they come, are anchored in something specific.

Judgments last. Recommendations are easy to make in the abstract and almost always wrong. “You should adopt CQRS” is a generic recommendation. “The order placement flow has a race condition that fires under campaign load and CQRS would not fix it; what would fix it is a single transactional boundary, here, and we estimate 8 engineer-days” is a useful recommendation. The difference is the work between facts and judgments.

Impact always. Every recommendation carries a cost-of-doing and a cost-of-not-doing, in concrete terms (engineer-weeks, dollars per quarter of incident cost, number of customer-visible failures per month). Without those numbers, the recommendation is unfundable, and an unfundable recommendation is the same thing as no recommendation.
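
The cost-of-doing versus cost-of-not-doing comparison is simple arithmetic, and a minimal sketch makes it concrete. The function name, the daily rate, and the quarterly incident cost below are assumptions chosen for illustration; only the 8 engineer-days figure echoes the example above.

```python
def payback_quarters(engineer_days, cost_per_engineer_day, incident_cost_per_quarter):
    """Quarters until a fix has paid for itself in avoided incident cost."""
    if incident_cost_per_quarter <= 0:
        raise ValueError("cost of not doing must be positive to compute a payback")
    cost_of_doing = engineer_days * cost_per_engineer_day
    return cost_of_doing / incident_cost_per_quarter


# Hypothetical figures: 8 engineer-days at 1,000 per day, against
# 16,000 per quarter of incident cost if the problem is left alone.
quarters = payback_quarters(8, 1_000, 16_000)
print(f"pays for itself in {quarters:.1f} quarters")
```

A recommendation that pays back inside a quarter or two tends to be an easy funding conversation; one that never pays back probably belongs in the appendix.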

A review that follows these three principles produces an artifact that survives the meeting because the recipients can act on it. A review that does not produces a deck.

The ten-day timeline

Two weeks is enough to do this well. It is also enough that the team will start dreading the engagement if it takes longer. Ten working days, structured.

Day 1: Kickoff

Half a day with the engineering leadership and the executive sponsor. Three things to nail down:

Scope. What system, what concerns, what is out of bounds. “We want a review of the API and the data layer; not the frontend, not the mobile apps.” Specific exclusions are as important as inclusions.

Audience. Who reads the final document. The CTO is a different audience than the board. The format should match. If the review is for a board, the executive summary needs to land in a paragraph; if it is for the engineering team, the depth can go further.

The motivating concern. What worry triggered the engagement? “We are about to scale 10x and want to know what breaks.” “We are considering a rewrite and want a second opinion.” “We had three incidents in three months and we do not know if they are connected.” The honest answer to this question shapes every conversation that follows. If the answer is “the board asked for it and we do not actually know what we want,” that is fine, but say so.

The other half of day one is access. Read access to the repository, to the issue tracker, to incidents and postmortems, to dashboards, to the cloud accounts. If access takes more than a day to set up, the review starts late, which compresses everything that follows.

Days 2-3: Discovery

These are reading days. The aim is to build a mental model of the system before talking to anyone in depth.

The repository. Walk the codebase. Not every file. The high-traffic ones: the controllers, the entry points, the central entities, the message handlers. Look at file sizes (any file over 800 lines is a flag), at directory shapes (any directory with 50+ files is a flag), at the dependency surface (composer.lock, the number of packages, the age of the major versions).
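
The two size flags above are easy to automate. The sketch below is one possible way to do it, not a standard tool: the function name, the `.php` default (guessed from the mention of composer.lock), and the skipped directory names are all assumptions.

```python
from collections import Counter
from pathlib import Path

MAX_FILE_LINES = 800  # "any file over 800 lines is a flag"
MAX_DIR_FILES = 50    # "any directory with 50+ files is a flag"
SKIP_DIRS = {".git", "vendor", "node_modules"}  # assumed third-party/VCS dirs


def scan_repository(root, suffixes=(".php",)):
    """Return (oversized_files, crowded_dirs), each sorted largest first."""
    root = Path(root)
    oversized = []
    files_per_dir = Counter()
    for path in root.rglob("*"):
        relative = path.relative_to(root)
        if not path.is_file() or path.suffix not in suffixes:
            continue
        if SKIP_DIRS & set(relative.parts):
            continue
        files_per_dir[relative.parent.as_posix()] += 1
        with path.open(errors="ignore") as handle:
            line_count = sum(1 for _ in handle)
        if line_count > MAX_FILE_LINES:
            oversized.append((relative.as_posix(), line_count))
    crowded = [(d, n) for d, n in files_per_dir.items() if n >= MAX_DIR_FILES]
    oversized.sort(key=lambda item: -item[1])
    crowded.sort(key=lambda item: -item[1])
    return oversized, crowded
```

The output is a reading list, not a verdict: a long file or a crowded directory is a place to look first, nothing more.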

The git history. Last twelve months of commits. What has changed most? Where are the hot files (every project has 5-10 files that are touched in 80% of PRs)? Who is committing to what (a single author dominating a region usually indicates either expertise or risk concentration, often both)?
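
A rough version of the hot-file and author-concentration questions can be answered straight from `git log`. This is a sketch under assumptions: it counts commits rather than PRs, the marker string and function names are invented here, and renamed files are counted under each name separately.

```python
import subprocess
from collections import Counter, defaultdict

COMMIT_MARKER = "--commit--"  # arbitrary prefix to tell commit lines from file paths


def read_git_log(repo_path, since="12 months ago"):
    """Return one marker line (with author name) per commit, then its files."""
    result = subprocess.run(
        ["git", "-C", repo_path, "log", f"--since={since}", "--name-only",
         f"--pretty=format:{COMMIT_MARKER}%an"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def hot_files(log_text, top=10):
    """Rank files by how many commits touched them, with the dominant author."""
    changes = Counter()
    authors = defaultdict(Counter)
    total_commits = 0
    author = None
    for line in log_text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(COMMIT_MARKER):
            author = line[len(COMMIT_MARKER):]
            total_commits += 1
        else:
            changes[line] += 1
            authors[line][author] += 1
    report = []
    for path, count in changes.most_common(top):
        lead_author, lead_count = authors[path].most_common(1)[0]
        report.append({
            "path": path,
            "commits": count,
            "share_of_commits": count / total_commits,
            "lead_author": lead_author,
            "lead_author_share": lead_count / count,
        })
    return report
```

Calling `hot_files(read_git_log("."))` inside a checkout gives the top ten. A high `lead_author_share` on a hot file is the "expertise or risk concentration" signal to raise in the interviews.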

The issue tracker. Open bugs, recent incidents, the items in the backlog that have been there longest. The ratio of bug tickets to feature tickets is a useful signal. The age of the oldest tickets is a useful signal. The patterns in incident postmortems are very useful signals.

The dashboards. Latency, error rates, throughput, queue depths, database load. What is normal? What spikes? What does the team look at, and what does the team not look at?

By the end of day 3 you should have a one-page summary, in your own notes, of the system shape. Not for sharing yet. For use as the spine of the conversations that come next.

Days 4-6: Deep dive interviews

Three days of conversations. Three to five engineers per day, 60-90 minutes each. The list comes from the leadership team, with one rule: include at least one engineer who is unhappy. Reviews that only talk to the happy engineers only document the comfortable parts of the system.

The questions are not architecture questions. They are work questions.

- What did you ship in the last two weeks? Walk me through it.

- What did you try to ship that was harder than expected? Why?

- What part of the system do you avoid changing if you can?

- If I gave you a week with no feature work, what would you fix first?

- What is the last thing that woke you up at 3am? What did the postmortem look like?

- If you could explain one thing about this system to a new engineer joining tomorrow, what would it be?

- If you had to predict the next major incident, what would it be?

These questions surface the lived experience of the system, which is almost always different from the version told to leadership. The patterns repeat across engineers. By the third interview you can usually predict half of what the next engineer will say. By the eighth or ninth, you have a reliable picture of where the system actually hurts.

Day 7: Analysis

A focused day of synthesis. No meetings. Build the artifacts that the second half of the engagement will rest on.

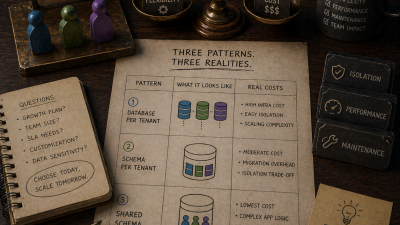

The system diagram. Not the one in the wiki. The one that matches what the engineers actually described. This is usually the moment a quiet flaw becomes loud: the wiki diagram has six clean services; the actual system has three services that are tangled into each other through a shared database and a forgotten middleware that everyone depends on.

The risk register. Pulled from incidents, interviews, and code observations. Each entry: what the risk is, how it manifests, how likely it is to fire in the next six months, what fires it, what blast radius it has. Use the same severity scale you would use for production incidents. Do not invent a custom scale.
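
One way to keep register entries uniform is to give them a fixed shape. The sketch below mirrors the fields listed above; the four-level `Severity` scale is an assumed stand-in for whatever incident scale the client already uses, and the two sample entries (including their likelihoods) are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Assumed scale; substitute the client's existing incident severities.
    SEV1 = 1
    SEV2 = 2
    SEV3 = 3
    SEV4 = 4


@dataclass(frozen=True)
class Risk:
    what: str
    manifestation: str
    trigger: str
    blast_radius: str
    likelihood_six_months: float  # estimated probability, 0.0 to 1.0
    severity: Severity

    def __post_init__(self):
        if not 0.0 <= self.likelihood_six_months <= 1.0:
            raise ValueError("likelihood must be between 0.0 and 1.0")


def ranked(register):
    """Most severe first; within a severity, most likely first."""
    return sorted(register, key=lambda r: (r.severity, -r.likelihood_six_months))


register = [
    Risk("Nightly export overruns its window", "stale reports by morning",
         "data volume growth", "internal reporting", 0.8, Severity.SEV3),
    Risk("Order placement race condition", "duplicate or lost orders",
         "campaign load", "checkout and billing", 0.6, Severity.SEV1),
]
for risk in ranked(register):
    print(risk.severity.name, risk.what)
```

Forcing every entry through the same fields makes gaps visible: a risk with no nameable trigger or blast radius usually needs another conversation before it goes in the document.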

The decision log. The major architectural decisions the system reflects. Some of these are documented (as Architecture Decision Records, if you are lucky). Most are not. Reconstruct them from interviews and code archaeology. Mark each as “still load-bearing,” “load-bearing but should be revisited,” or “decision since reversed but artifacts remain.” This list is often the most surprising one for the leadership team to see.

The hotspot map. The 5-10 places in the code where most of the recent change is concentrated and most of the future risk lives. Pull from git, from incident locations, from interview mentions. Adam Tornhill’s Code as a Crime Scene work is the canonical reference for using VCS history to find these hotspots.
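
A simple way to merge the three sources is to normalise each and add them up. This is a sketch of that idea only; it is not Tornhill's method, and the equal weighting of the three signals is an assumption worth adjusting per engagement.

```python
def hotspot_map(churn, incidents, mentions, top=10):
    """Combine three {path: count} signals into a ranked list of (path, score).

    Each signal is scaled so its largest value is 1.0, then the three are
    summed, giving a score between 0.0 and 3.0 per path.
    """
    def normalise(counts):
        peak = max(counts.values(), default=0)
        return {path: value / peak for path, value in counts.items()} if peak else {}

    signals = [normalise(churn), normalise(incidents), normalise(mentions)]
    paths = set().union(*signals)
    scores = {path: sum(signal.get(path, 0.0) for signal in signals) for path in paths}
    # Highest score first; ties broken alphabetically for stable output.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))[:top]
```

Paths that score well on all three signals are the ones where code history, incident history, and engineer intuition agree, which is usually where the first recommendation should point.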

By the end of day 7 you have the raw material. The next three days turn it into the artifact.

Days 8-9: Synthesis and prioritization

Two days of writing. The format that has worked best for me:

Section 1: Executive summary. One page. Three to five bullets at the top with the headline findings. A paragraph for each. The CTO and CFO read this and nothing else; design accordingly.

Section 2: System overview. What the system actually is. The honest diagram. The bounded context map (or the absence of one). The data model at the level of “what are the central entities and how do they relate.” Two to four pages.

Section 3: Risk register. The top 10-15 risks, with severity, blast radius, cost-of-fixing estimate, and cost-of-not-fixing estimate. This is the section that becomes the planning instrument for the next quarter.

Section 4: Recommendations. Specific, costed, sequenced. Not “consider adopting microservices.” Rather: “Extract the billing capability into its own bounded context, behind a routing seam, with the messaging contract specified in the appendix. Estimated 6-8 engineer-weeks. Reduces blast radius of the order placement race condition. Should be done before the spring campaign load.”

Section 5: A 90-day plan. Three to five concrete moves, ordered, with expected outcomes. The first move should be doable inside the next two weeks; this gives the team an early win and proves the review was not just a deck.

Section 6: Out-of-scope but important. Things that came up during the review that were outside the agreed scope but that the leadership team should know about. Sometimes these are bigger than the in-scope findings.

Day 10: Validation and handoff

Half a day with the engineering team. Walk them through the document. Listen for “that is not quite right” and “you missed this” and “we tried that two years ago and here is what happened.” Update the document live. The engineers’ calibration is the validation step.

The other half of the day with the executive sponsor. Walk them through the executive summary and the 90-day plan. Get explicit yes-or-no on the first three moves. Without the explicit commitment, the document goes into the same drawer as every other consulting deliverable.

That is the engagement. Two weeks in, two weeks out, with a costed and committed plan as the output.

What goes in the document, in order

The order of the document matters as much as the content. The order I use, with the reasoning:

- Executive summary. First, because most readers stop here. If they only read this, they should still walk away with the headline message and the recommended actions.

- What we did and did not look at. Second, because it sets honest expectations. A review that examined the API but not the data layer should say so up front, so the reader does not assume conclusions about the data layer.

- System overview. Third, because it is the shared understanding the rest of the document rests on.

- Risk register. Fourth, because it is what the leadership team will fund against.

- Recommendations. Fifth, because they only make sense in light of the risks.

- 90-day plan. Sixth, because it is the operational handoff.

- Out-of-scope notes. Seventh, because they need to be there but should not distract from the in-scope conclusions.

- Appendices. Eighth: the data behind the diagrams, the full interview list, the incident timeline, the long-form versions of risks.

A document that follows this order is readable in 15 minutes for the executive and in 90 minutes for the engineering team. A document that buries the recommendations in the back is a document that did not respect its readers’ time.

What the recommendations should and should not look like

I have seen enough architecture review decks to have strong opinions about what makes recommendations actionable.

Good recommendations are specific. “Add an index on orders.customer_id to fix the slow query behind the customer history endpoint” is specific. “Improve database performance” is not.

Good recommendations are sequenced. The 90-day plan should make it clear what to do first, what depends on what, and what can wait. A flat list of 30 things is paralysis.
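
"What depends on what" is a dependency graph, and ordering one is a topological sort. The sketch below uses Python's standard `graphlib` (3.9+); the move names and their dependencies are hypothetical, loosely based on the billing example earlier.

```python
from graphlib import CycleError, TopologicalSorter


def sequence_moves(depends_on):
    """Order moves so each comes after everything it depends on.

    depends_on maps each move to the set of moves that must finish first.
    """
    try:
        return list(TopologicalSorter(depends_on).static_order())
    except CycleError as error:
        # args[1] holds the list of nodes forming the detected cycle.
        raise ValueError(f"moves depend on each other in a cycle: {error.args[1]}") from error


# Hypothetical 90-day plan.
plan = {
    "add transactional boundary": set(),
    "introduce routing seam": set(),
    "extract billing": {"introduce routing seam"},
    "retire shared tables": {"extract billing"},
}
for step, move in enumerate(sequence_moves(plan), start=1):
    print(step, move)
```

If the sort raises, the plan contains two moves that each wait on the other, which is worth finding out before the plan reaches the executive sponsor.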

Good recommendations are costed. Engineer-weeks. Dollars. Customer impact. Without numbers, the leadership team cannot fund them, and unfunded recommendations are the same as none.

Good recommendations are reversible. Where possible, recommend moves that the team can undo if they learn something unexpected along the way. “Extract the billing capability behind a feature flag and roll it out gradually” is reversible. “Rewrite the checkout in Go” is not.

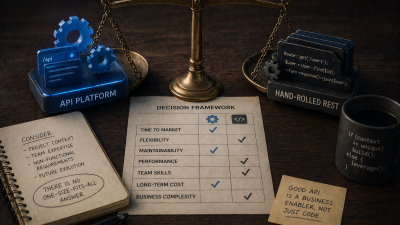

Bad recommendations come from playbooks, not from the system. If the recommendations could have been written without doing the discovery, the discovery did not change them, which means it was not the input to them. This is the single most common failure mode.

Bad recommendations are framed as “best practices.” Best practice means nothing without context. The right architecture for a 12-engineer team is not the right architecture for a 4-engineer team. The right architecture for a 1-million-request-per-day API is not the right architecture for a 10,000-request-per-day one. Generic best practices are how reviews stop being useful.

Bad recommendations are bundled. “Adopt CQRS, event sourcing, microservices, and a service mesh” is four recommendations stapled together, none of which can be evaluated on its own merits. Each needs to stand or fall on its own.

What to push back on, even when the client asks for it

Three patterns where the client wants something specific and the right answer is to push back.

“Tell us whether to rewrite.” This is the question that triggers many reviews. The honest answer is almost always “no, do not rewrite, here is the migration path that gets you most of the value at a fraction of the risk.” Clients sometimes do not want this answer; they have already decided to rewrite and they want validation. The review’s job is to give them the honest answer, with the data behind it, and let them decide. A review that rubber-stamps a rewrite the client has already committed to is not a review.

“Recommend the technology X we have been considering.” Same dynamic. The technology in question may or may not be appropriate. The review’s job is to evaluate it against the actual system, not to validate the prior decision. If the recommendation is “do not adopt X, here is why, here is what to do instead,” that should be in the document.

“Make the deck shorter, with fewer warnings.” The executive sponsor sometimes wants a more positive document because the document will be read by the board, or by investors, or by a customer. The right move is to keep the warnings in the engineering version and produce a separate executive version that is shorter but does not lie. A review that softens its findings to please the audience is worse than no review.

Common failure modes

The reviews that do not produce change tend to fail in the same ways.

The review never makes contact with engineers. The consultant talks only to leadership. The review reflects the version of the system leadership believes exists, not the one engineers work in. Always interview engineers, and always include the unhappy ones.

The review documents problems but not consequences. “The order processing has duplicated logic in three places” is a fact, not a problem. Why does it matter? What does it cost? What customer is affected? Without the consequence, the recommendation cannot be funded.

The review recommends things that require approval the client does not have. “Hire a 30-person platform team” is not a recommendation a CTO can act on alone. Recommendations should land within the authority of the people receiving them, or they need to be flagged as requiring escalation.

The review is too long. A 200-page document does not get read. Force yourself to under 40 pages, with most of the depth in appendices. The 40-page version is the one that produces decisions.

The review never gets a follow-up. A review without a 90-day check-in is a review that gets filed. Build the follow-up into the engagement.

What clients should expect to pay for

A two-week architecture review for a system of meaningful size is a real engagement. Expect it to cost the equivalent of two senior engineering weeks, on the consulting side, plus the time of the engineers being interviewed and the executive sponsor. Total exposure: roughly three to four engineer-weeks of organizational time, plus the consulting fee.

This is not cheap. It is also not expensive compared to the cost of the wrong architectural decision, which is often measured in quarters of slowed feature velocity or millions in failed rewrites.

The signal that the review was worth the price is the same in every case: did the system get better, in measurable ways, in the six months that followed? A review that triggered three concrete moves that reduced incident rates, improved deployment frequency, or unlocked a feature the team could not previously ship has paid for itself many times over. (DORA has spent years showing that deployment frequency, lead time, change failure rate, and time to restore are the four metrics that track delivery performance; if a review does not move at least one of them over the following two quarters, the review probably did not land.) A review that produced a deck has not.
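
To check whether any of the four metrics moved, you need a baseline before the review and a reading two quarters later. The sketch below computes simplified versions from deployment records; the `Deployment` shape and the exact calculations are assumptions for illustration, not DORA's official definitions.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass(frozen=True)
class Deployment:
    committed_at: datetime            # when the change was committed
    deployed_at: datetime             # when it reached production
    failed: bool = False              # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def delivery_metrics(deployments, period_days):
    """Simplified four-metric snapshot for one observation period."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")
    failures = [d for d in deployments if d.failed]
    restores = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "deployments_per_week": len(deployments) * 7 / period_days,
        "median_lead_time": median(d.deployed_at - d.committed_at for d in deployments),
        "change_failure_rate": len(failures) / len(deployments),
        "median_time_to_restore": median(restores) if restores else None,
    }
```

Run it once on the six months before the review and once on the six months after; the comparison, not either number alone, is what tells you whether the review landed.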

When you do not need a review

Not every system needs a review every quarter. Skip it if:

- The system is small (under 30k lines, under 4 engineers) and the team is in agreement about what hurts. You do not need an outsider to tell you what you already know. Spend the money on fixing it.

- You commissioned one in the last six months and the recommendations are still being executed. A second review during execution introduces noise and demoralizes the team. Wait until the first set of moves is done and the picture has changed.

- The leadership team will not act on the findings. A review delivered into a culture that does not fund engineering work will produce zero change. Address the funding culture first; review later.

For everyone else, a focused two-week review every 12-18 months is one of the highest-leverage investments in an external perspective an engineering organization can make.

If you have a system you want a clear, honest, costed view of, my monolith modernisation engagement opens with exactly this two-week review. Two weeks in, a thirty-five-page document, and a ninety-day plan with the first move scoped to ship before the engagement ends.

References

- Documenting Architecture Decisions by Michael Nygard: the 2011 blog post that introduced ADRs as a lightweight format for capturing context, decision, and consequences.

- Architecture Decision Record templates and examples: Joel Parker Henderson’s curated collection of ADR templates, including Nygard, MADR, and Tyree variants.

- Code as a Crime Scene by Adam Tornhill: the original essay on using VCS history and logical coupling to find hotspots, expanded in the book of the same name.

- Your Code as a Crime Scene (Pragmatic Bookshelf): Tornhill’s book applying behavioural code analysis to technical debt prioritisation.

- DORA (DevOps Research and Assessment): the long-running research program behind the four key delivery metrics that a good review should move over the following two quarters.