Every engineering team I have worked with has a technical debt backlog. Almost none of them ever pay any of it down.

That is not because engineers are lazy or because product management is hostile. It is because the artifact itself is wrong. A backlog of technical debt items, ranked by some flavor of “engineering pain,” is unactionable from the perspective of anyone holding the budget. It looks like a list of expensive favors the engineering team is asking the business to grant them, and so it sits there, growing, while feature work gets prioritized over and over.

The fix is to stop treating technical debt as a backlog of tasks and start treating it as a map of business risk. A map shows you where the dragons are, how close they are to the road, and what happens if you walk into one. A backlog just shows you a list of caves to explore, in no particular order, with no indication of what is inside any of them. (Ward Cunningham, who coined the debt metaphor in his 1992 OOPSLA experience report, always framed it as a risk conversation, not a to-do list. Somewhere between him and the typical Jira board, the framing got flattened.)

This essay is about how to draw that map, how to use it to fund the work, and how to keep it honest as the system evolves.

Why the backlog framing fails

The backlog framing fails for three structural reasons, and they show up almost universally:

- The audience cannot read it. A backlog item that says “refactor OrderProcessor to remove the static dependency on LegacyTaxCalculator” tells the CFO nothing about whether the company is going to lose customers. It is engineer-to-engineer language, presented to a non-engineering decision maker.

- The cost is visible, the risk is not. Backlog items get rough estimates (“two sprints, maybe three”). They almost never get a dollar value attached to not doing them. The conversation becomes “spend two sprints fixing X” versus “ship feature Y,” and feature Y always wins because feature Y has a revenue case attached.

- The list never compresses. Every quarter the team adds new items faster than it removes old ones. After eighteen months the backlog has 400 items and nobody believes the team will ever get through them, including the team. The artifact loses credibility, then it loses attention, then it loses the meeting slot.

The map framing fixes all three. A map is read by the same people who read risk registers and incident reports. A map shows blast radius alongside cost. A map does not need to list every pothole in the city, just the ones that block traffic on the main routes.

What a debt map actually contains

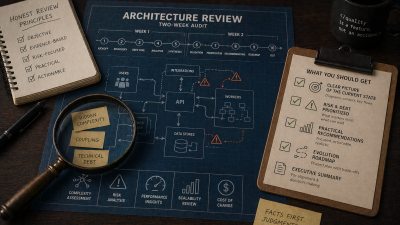

The map I draw for clients has five layers. None of them require fancy tooling. A whiteboard photo is fine for the first version.

Layer 1: The terrain. This is the system at the level of bounded contexts or major modules. Not classes. Not services. The handful of areas a non-engineer would name when asked what the system does. For a typical Symfony monolith I am usually looking at six to twelve regions: ordering, billing, identity, content, search, admin, integrations, and so on.

Layer 2: The trails. These are the customer or revenue paths that cross the terrain. “A new user signs up and completes their first purchase” is a trail. “An invoice is generated and sent” is a trail. Each trail crosses several regions. Trails matter because they are how value moves through the system, and so they are how risk moves through the system too.

Layer 3: The hazards. These are the actual debt items, but placed on the map rather than listed. A hazard has a location (which region, which trail), a severity (critical, significant, manageable, low), and a description that names the business consequence, not the technical condition. “Order confirmation can silently lose the discount code under load” is a hazard. “Method too long” is not.

Layer 4: The blast radius. For each critical or significant hazard, mark which trails get blocked or degraded if the hazard fires. This is the part most teams skip and it is the part the business cares about most. A hazard that blocks the signup-to-purchase trail is in a different category from one that breaks an internal admin export.

Layer 5: The legend. Severity should not be subjective. Define the criteria up front so two engineers looking at the same hazard agree on its color.

Here is the legend I use. Adapt the dollar figures and downtime tolerances to your business; the structure is what matters.

- Critical (red). A hazard that has caused, or is highly likely to cause within the next six months, a customer-visible incident measurable in revenue, regulatory exposure, or safety. Recovery requires an unplanned engineering response. Examples: data corruption paths, race conditions in payments, missing authorization checks on tenant-scoped resources.

- Significant (orange). A hazard that materially slows feature work in a region the business has roadmapped for the next two quarters. Recovery is planned, but the cost grows monthly. Examples: a legacy integration that every new feature has to route around, a test suite that takes 40 minutes and so is increasingly skipped, an entity graph that no engineer feels safe modifying.

- Manageable (yellow). A hazard that adds friction but does not block. The team has working patterns to live with it. It deserves to be tracked but not necessarily worked. Examples: inconsistent naming conventions, partial test coverage in a stable area, a deprecated library version that still works.

- Low (green). A hazard with no current business impact. Mostly aesthetic or minor. Documented for completeness, not for action. Examples: outdated comments, formatting drift, unused configuration keys.

The discipline this legend forces is the entire point. If a hazard is “critical” but you cannot describe a customer-visible failure mode, it is not critical, it is significant. If a hazard is “significant” but no roadmapped feature touches its region, it is manageable. The legend gives you a vocabulary that survives the move from the engineering room to the boardroom. (A related distinction worth knowing is Fowler’s technical debt quadrant, which separates prudent from reckless and deliberate from inadvertent debt. Useful when you need to explain to leadership that not all debt is negligence.)
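One way to make the legend hard to bend is to write its questions down as code. The following is a minimal Python sketch under stated assumptions, not a definitive implementation: the `HazardFacts` fields and the `classify` function are hypothetical names invented for illustration, and the four yes/no questions are a simplification of the legend above.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    CRITICAL = "red"
    SIGNIFICANT = "orange"
    MANAGEABLE = "yellow"
    LOW = "green"


@dataclass(frozen=True)
class HazardFacts:
    """Answers to the legend's questions for one hazard (hypothetical fields)."""
    customer_visible_failure: bool  # can you describe a customer-visible failure mode?
    likely_within_six_months: bool  # has it fired, or is it highly likely to fire soon?
    slows_roadmapped_region: bool   # does it slow work in a region on the roadmap?
    adds_friction: bool             # does the team feel it, even if it does not block?


def classify(facts: HazardFacts) -> Severity:
    """Walk the legend top-down; a hazard only keeps a color it can justify."""
    if facts.customer_visible_failure and facts.likely_within_six_months:
        return Severity.CRITICAL
    if facts.slows_roadmapped_region:
        return Severity.SIGNIFICANT
    if facts.adds_friction:
        return Severity.MANAGEABLE
    return Severity.LOW


# A hazard someone calls "critical" without a customer-visible failure mode
# falls through to orange, or to yellow if no roadmapped region is affected.
claimed_critical = HazardFacts(
    customer_visible_failure=False,
    likely_within_six_months=True,
    slows_roadmapped_region=True,
    adds_friction=True,
)
print(classify(claimed_critical).value)
```

The ordering of the checks is the design choice: each color has to be earned by answering its question, so a hazard cannot be promoted by assertion alone.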

How to actually draw the map

The first time I do this with a team, it takes a week. By the third quarterly refresh it takes a day.

Step one: list the regions. Get the senior engineers and one product person in a room. Whiteboard the regions. Argue about the boundaries. The argument is the work; the diagram is the artifact. You are looking for ten or so boxes that everyone can name.

Step two: trace the trails. Pick the four or five customer journeys that drive the most revenue or cause the most support load. Draw them across the regions as colored arrows. You will discover that one or two regions sit on every trail. Those regions are your structural risk concentrators.

Step three: harvest existing knowledge. You do not need a new audit. You already have one in three places: the incident postmortems from the last twelve months, the “we should fix this someday” Slack messages senior engineers have written, and the items in the existing backlog that have been there for more than two quarters. Triage all three sources into hazards, placed on the map.

Step four: severity-color them. Use the legend strictly. If you cannot articulate the business consequence in a sentence, the hazard is not red. The discipline of writing the consequence sentence is what turns the artifact from an engineering wishlist into a risk register.

Step five: trace blast radius. For each red and orange hazard, mark the trails it sits on. This is the bridge from “engineering is worried” to “the business should be worried.”

At the end of step five you have a one-page artifact that tells you three things at a glance: where the dangerous places are, which customer journeys depend on those places, and how severe each hazard is. That is a map.
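A whiteboard is enough for all five steps, but the same structure fits in a few lines of Python if you want the map to live next to the code. This is a sketch under assumptions: the class names, the two example trails, and the regions they cross are hypothetical, chosen only to show how risk concentrators (step two) and blast radius (step five) fall out of the data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trail:
    name: str
    regions: tuple  # regions the customer journey crosses, in order


@dataclass(frozen=True)
class PlacedHazard:
    consequence: str  # the business consequence, in one sentence
    region: str
    severity: str     # "red", "orange", "yellow", or "green"


def risk_concentrators(trails):
    """Regions that sit on every trail."""
    if not trails:
        return set()
    common = set(trails[0].regions)
    for trail in trails[1:]:
        common &= set(trail.regions)
    return common


def blast_radius(hazard, trails):
    """Names of the trails that cross the hazard's region."""
    return [t.name for t in trails if hazard.region in t.regions]


# Hypothetical example data.
TRAILS = [
    Trail("signup to first purchase", ("identity", "ordering", "billing")),
    Trail("invoice generated and sent", ("billing", "integrations")),
]
discount_hazard = PlacedHazard(
    "Order confirmation can silently lose the discount code under load",
    region="ordering",
    severity="red",
)

print(risk_concentrators(TRAILS))             # regions shared by all trails
print(blast_radius(discount_hazard, TRAILS))  # trails degraded if it fires
```

Treating blast radius as "every trail that crosses the hazard's region" is deliberately coarse. A real map may mark a hazard against only some of the trails in its region.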

What a hazard entry should actually look like

The hazards on the map need a backing document. One per hazard, no more than half a page. The format I have settled on after a few iterations:

### Hazard: Order confirmation silently drops discount codes under load

**Location:** Ordering region, on the signup-to-purchase trail.

**Severity:** Critical (red).

**Business consequence:** Customer pays full price, sees full price in confirmation email, support ticket follows. Revenue is collected but trust is lost. We have seen this fire ~12 times per quarter at current traffic; with the spring campaign traffic forecast to triple, we expect ~36 incidents per quarter, each costing ~30 minutes of support time and either a refund or a goodwill credit.

**Why it exists:** The discount code is resolved in a separate transaction from the cart finalization. Under DB contention the second transaction times out and is silently retried with a stale cart context.

**What removing it costs:** Roughly 2 engineer-weeks. Requires touching Order, DiscountResolver, and the Stripe webhook handler. Has test coverage for the happy path; we would add load tests as part of the fix.

**What not removing it costs:** ~36 incidents per quarter at the new traffic level, ~18 hours of support time, ~$1,800 in refunds. Plus the trust cost, which is unmodeled.

This format does the job a backlog item cannot. A finance person can read it. A product person can plot it against the campaign timeline. An engineer can scope it. And the cost-of-not-fixing is in the document, in the same units as the cost-of-fixing.
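The cost-of-not-fixing figures in the example hazard are simple arithmetic, and it helps to show the working so a finance reader can check it. The sketch below reproduces them in Python. The function name is hypothetical, the $50 refund per incident is not stated in the hazard document but is implied by $1,800 across 36 incidents, and scaling incidents linearly with traffic is the same assumption the hazard document makes.

```python
def quarterly_exposure(incidents_now, traffic_multiplier,
                       support_minutes_each, refund_each):
    """Project per-quarter incident cost, assuming incidents scale with traffic."""
    incidents = incidents_now * traffic_multiplier
    return {
        "incidents": incidents,
        "support_hours": incidents * support_minutes_each / 60,
        "refunds": incidents * refund_each,
    }


# Figures from the example hazard: 12 incidents per quarter today, traffic
# forecast to triple, 30 minutes of support each. The $50 average refund is
# derived from the document's totals, not stated in it.
exposure = quarterly_exposure(
    incidents_now=12,
    traffic_multiplier=3,
    support_minutes_each=30,
    refund_each=50,
)
print(exposure)
print("refunds per year:", exposure["refunds"] * 4)
```

Putting the fix and the exposure in the same units still requires a loaded cost for an engineer-week and a support hour, which depend on your organization and are left out here.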

If writing this for every red and orange hazard sounds like a lot, it is. That is the point. If you cannot make the case in half a page, you do not actually have the case yet, and the map will be more honest with the hazard left out than with it included on faith.

How the map gets funded

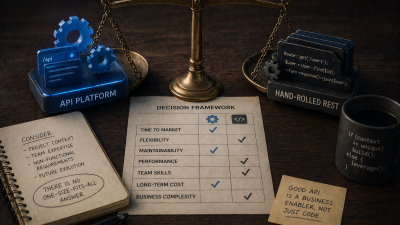

The map is the funding instrument. Once it exists, three things become possible that were impossible with a backlog.

Continuous risk allocation. Instead of asking for “20% of capacity for tech debt,” you ask for budget against the red hazards specifically, with the cost-of-not-fixing on the page. The conversation moves from “engineering wants tech debt time” to “we are looking at roughly $7,200 a year in refunds on the signup trail, before support time; do we want to spend two engineer-weeks to remove it?” This is the same conversation the business has about insurance, infrastructure, and compliance. It is fundable in the same way.

Bundling debt with feature work. When a roadmapped feature touches a region with a red or orange hazard in it, the feature ticket gets the hazard cleanup attached. The cleanup is not a separate ask, it is a pre-condition for the feature being safely deliverable. This is the single highest-leverage way to actually shrink the map, and it requires the map to exist so that the bundling decision is visible.

Saying no to false debt. Engineers often want to refactor things that are not actually risky, just inelegant. The map gives you a defensible “no.” If the proposed refactor is on a region with no hazards on any trail, it is not earning its budget against the work that is. The map protects the team from itself, in addition to making the case to the business.

How to keep the map honest

A map drawn once and never updated becomes worse than no map, because people start making decisions against a stale picture. Three rituals keep it honest.

Quarterly refresh. Once a quarter, sit the same engineers and product person back down for half a day. Walk the legend. Re-color hazards that have moved (critical to significant if the blast radius shrank; significant to critical if traffic grew). Add new hazards from the quarter’s incidents. Remove resolved ones with a celebration, briefly.

Incident-driven updates. Every postmortem ends with a single question: does this incident change the map? If yes, update the map in the postmortem document, before the meeting ends. Most incidents will reveal that an existing yellow hazard was actually orange, or that a region you thought was safe has a previously invisible trail across it.

Roadmap review. When a new feature is being scoped, the first question is: which regions does it touch, and what hazards live there? If the feature touches a red hazard, the feature is not scoped until the hazard is either fixed first or accepted in writing as a known risk by the feature sponsor. This makes the map an active gate, not a passive document.
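The roadmap gate is mechanical enough to sketch in code. The following Python is a minimal illustration under assumptions: `MapHazard`, `accepted_by`, and `blocking_hazards` are hypothetical names, and a fixed hazard is assumed to have been removed from the map already, so only written acceptance needs a field.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MapHazard:
    name: str
    region: str
    severity: str                      # "red", "orange", "yellow", or "green"
    accepted_by: Optional[str] = None  # sponsor who accepted the risk in writing


def blocking_hazards(feature_regions, hazards):
    """Red hazards in the feature's regions that nobody has accepted in writing."""
    return [
        h for h in hazards
        if h.severity == "red"
        and h.region in feature_regions
        and h.accepted_by is None
    ]


# Hypothetical map contents and a feature that touches two regions.
HAZARDS = [
    MapHazard("Discount code dropped under load", "ordering", "red"),
    MapHazard("Slow test suite", "billing", "orange"),
]

blockers = blocking_hazards({"ordering", "search"}, HAZARDS)
for hazard in blockers:
    print(f"Not scoped yet: fix or accept '{hazard.name}' first")
```

An empty result means the feature can be scoped. Orange hazards do not block here; they are candidates for bundling rather than gates.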

What this looks like at six months

A team that adopts the map framing usually shows the same trajectory.

Month one. The map is rough. Severity colors are inconsistent. The first hazard documents take longer than expected to write. There is a lot of “is this red or orange?” arguing, which is uncomfortable but productive.

Month three. The legend has stabilized. The team has retired its first three or four red hazards, two of them as part of feature work, one as a standalone fix. The non-engineering stakeholders are starting to refer to the map by name in roadmap meetings.

Month six. The map is the artifact the team uses to talk about engineering risk in every quarterly planning conversation. The backlog of unaddressed debt items is smaller than it was, not because more was fixed (though more was), but because the items that did not earn placement on the map were quietly closed. The team spends less time arguing about “tech debt budget” and more time deciding which red hazards to retire next.

The change is not that more work gets done. The change is that the work that gets done is the work that matters most to the business, and everyone agrees on what that is.

Common objections, and how to handle them

I have presented this framing to enough teams that I have a short list of the objections that come up.

“This is just a risk register with extra steps.” Yes, partly. The two differences are that it is spatial (hazards are placed on regions and trails, not just listed) and that it is owned by engineering rather than by a separate risk function. The spatial dimension is what lets you see concentrations and bundle debt with feature work. The engineering ownership is what keeps the entries honest.

“Our debt is too tangled to map.” Then the map will be ugly, with overlapping hazards and shared blast radii, and that will tell you something important: your real problem is not the individual hazards, it is the absence of clean regions. Mapping that explicitly is the first step toward fixing it. A messy map is worth more than a clean backlog.

“What about the small stuff?” Leave it off the map. Not every paper cut needs board-level visibility. Yellow and green hazards live in a separate, lighter-weight tracking system, and the team works them when adjacent feature work makes them cheap to address. The map is for the things that justify a conversation.

“This will take time we do not have.” Drawing the first map takes a week. Maintaining it takes a day a quarter. The team is already spending more than that on debt-related conversations that go nowhere. The map redirects that time to a productive artifact.

When this framing does not apply

The map is not always the right tool. Skip it if:

- The team is fewer than four engineers and the system is under 50k lines. At that scale you can hold the map in your head, and the formality is overhead.

- You are six months from sunsetting the system. A debt map for code about to be deleted is not worth the time. Just write down the operational hazards and triage incident-by-incident.

- The organization does not actually fund risk work. If the business has demonstrated, repeatedly, that it will not budget against risk under any framing, the map will not change that. You have a culture problem, not a backlog problem, and that is a different essay.

For everyone else, the map is the artifact that makes the work fundable, the conversation honest, and the system slowly safer.

If you are looking at a tangled backlog and a system that no longer feels safe to change, my technical debt engagement is built around exactly this map. A two-week mapping sprint, a costed register of red and orange hazards, and a ninety-day plan for retiring the ones that matter most.

References

- The WyCash Portfolio Management System by Ward Cunningham: the 1992 OOPSLA experience report that introduced the technical debt metaphor, framed from the start as a risk conversation.

- Technical Debt by Martin Fowler: bliki entry with Fowler’s thorough rewrite of the concept and further reading on Cunningham’s original framing.

- Technical Debt Quadrant by Martin Fowler: the prudent/reckless and deliberate/inadvertent axes, useful for explaining to leadership that not all debt is negligence.

- Postmortem Culture: Learning from Failure, Google SRE book: the canonical reference for blameless postmortems and incident review as a learning loop.