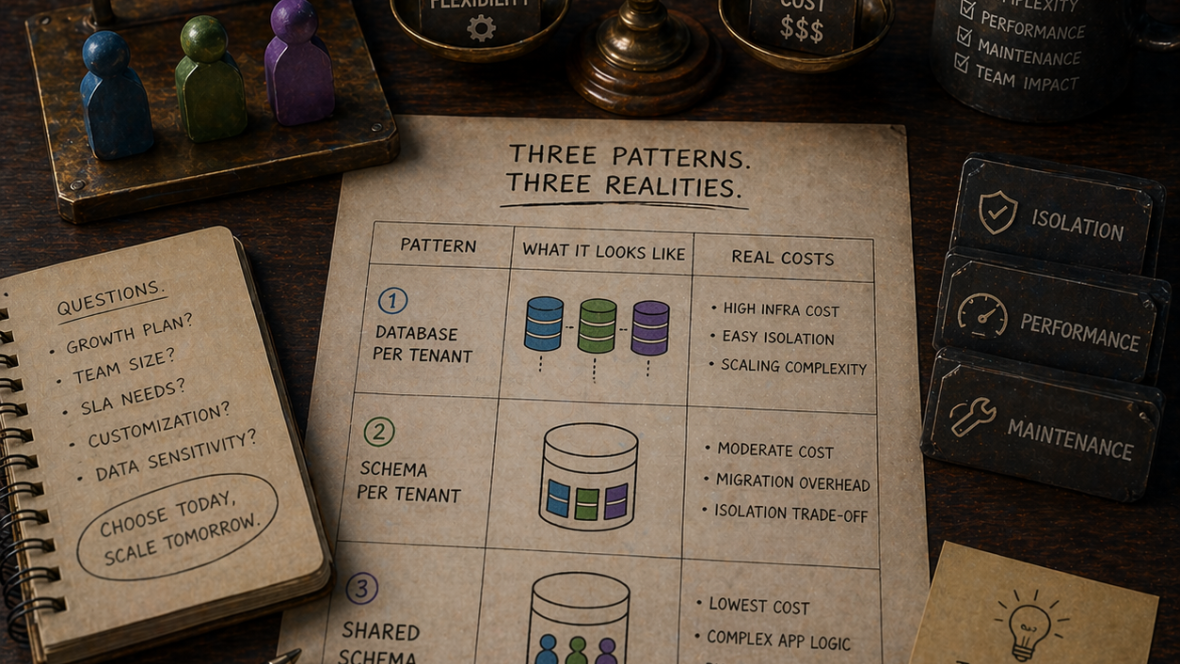

Every multi-tenant architecture decision I have been in the room for starts with the same question: “which pattern is most secure?” That is the wrong question, and answering it produces the wrong architecture. All three of the patterns I am about to describe can be made secure. None of them are secure by default. The more useful question, the one I try to reshape the conversation around, is: “which pattern has the cheapest operations for the shape of business we actually run?”

Because the differences between database-per-tenant, schema-per-tenant, and shared-schema are not really about isolation. Isolation is a byproduct. The differences are about the cost of the operations you will do weekly, monthly, and quarterly, forever, for as long as the system exists. Onboarding a new tenant. Running a migration. Investigating a support ticket. Scaling a hot tenant without disturbing a quiet one. Deleting a tenant for GDPR compliance. Restoring a single tenant from a backup.

This essay walks through the three patterns as I actually evaluate them in engagements, what each one costs to operate, and how to choose. It is Symfony-specific in the mechanics, but the analysis applies to any framework.

What a tenant actually is

Before any pattern, the word. “Tenant” is overloaded. I use it to mean: an isolated set of data and identity that the application treats as the unit of access control. A tenant has users. Users log into a tenant. Data written by one tenant’s users is never visible to another tenant’s users except through explicit, audited sharing.

The tenant is usually a customer (a company buying your SaaS). Sometimes it is a workspace inside a customer (Slack’s “workspace,” Notion’s “team”). Sometimes it is a physical site or a geography. The pattern decisions are the same regardless; the vocabulary changes.

Three things to pin down before writing any code:

- How many tenants do you expect? Ten large enterprise accounts and ten thousand small self-service accounts are different problems. The first is plausibly database-per-tenant. The second is almost certainly not.

- How variable are they in size? If the largest tenant is 1,000x the median, you have a noisy-neighbor problem regardless of pattern, and the pattern choice has to account for it.

- What is the data sensitivity? Healthcare and finance push you toward stronger isolation; a marketing tool does not need the same posture.

These three answers shape the rest. Ten enterprise tenants with 200x size variance in healthcare is a very different shop than two thousand self-service accounts with uniform usage in marketing tech. The right pattern for one is wrong for the other.

Pattern 1: Database per tenant

Each tenant gets its own database instance (or at least its own database on a shared instance). The application connects to a different database depending on which tenant the request belongs to.

What it looks like in Symfony:

    doctrine:
        dbal:
            default_connection: default
            connections:
                default:
                    url: '%env(resolve:DATABASE_URL)%'

At runtime, the connection is swapped per request using a RequestStack-aware connection factory or a decorator on the EntityManager. Most teams end up with something like:

    use Doctrine\DBAL\Connection;
    use Doctrine\DBAL\DriverManager;

    final readonly class TenantConnectionFactory
    {
        public function __construct(
            private TenantContextInterface $tenantContext,
            private TenantDatabaseRegistryInterface $registry,
        ) {}

        // Opens a connection to the database of the tenant that owns this request.
        public function forCurrentTenant(): Connection
        {
            $tenant = $this->tenantContext->current();
            $params = $this->registry->connectionParamsFor($tenant);

            return DriverManager::getConnection($params);
        }
    }

The TenantContextInterface reads the current tenant from the request (subdomain, path prefix, header, or authenticated user), and the registry maps tenant IDs to database connection parameters.
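
To make the resolution step concrete, here is a minimal sketch of the subdomain variant. The function `tenantSlugFromHost`, the class `InMemoryTenantDatabaseRegistry`, and the base domain are hypothetical names for illustration, not part of Symfony or Doctrine, and the registry here is keyed by slug rather than by a tenant object.

```php
<?php
declare(strict_types=1);

// Hypothetical helper: extracts the tenant slug from a host such as
// "acme.app.example.com" when the base domain is "app.example.com".
function tenantSlugFromHost(string $host, string $baseDomain): ?string
{
    $host = strtolower($host);
    $suffix = '.' . strtolower($baseDomain);

    if (!str_ends_with($host, $suffix)) {
        return null; // Not one of our tenant hosts.
    }

    $slug = substr($host, 0, -strlen($suffix));

    // Accept a single DNS label only, so "a.b.app.example.com" is rejected.
    return preg_match('/^[a-z0-9](?:[a-z0-9-]*[a-z0-9])?$/', $slug) === 1 ? $slug : null;
}

// Hypothetical in-memory registry; a real one would read from a
// control-plane database or a secrets store.
final class InMemoryTenantDatabaseRegistry
{
    /** @param array<string, array<string, mixed>> $paramsBySlug */
    public function __construct(private array $paramsBySlug) {}

    /** @return array<string, mixed> DBAL-style connection parameters */
    public function connectionParamsFor(string $slug): array
    {
        return $this->paramsBySlug[$slug]
            ?? throw new RuntimeException(sprintf('Unknown tenant "%s".', $slug));
    }
}
```

Returning null for an unrecognized host, and throwing for an unknown slug, keeps the failure loud: a request that cannot be mapped to a tenant should never fall through to some default database.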

What it costs to operate:

| Operation | Cost |
|---|---|
| Onboarding a tenant | Provision a database, run migrations, seed. Minutes to hours per tenant, scriptable. |
| Running a migration | Run it against every tenant database. 10 tenants: 10 migrations. 10,000 tenants: a real job. |
| Investigating a support ticket | Connect to one database, query directly. Easy, localized, no tenant filter to remember. |
| Scaling one hot tenant | Move that database to a bigger instance. Surgical, no impact on others. |
| GDPR deletion | Drop the database. Done. |
| Per-tenant backup restore | Restore one database. Easy. |
| Cost floor | High. Even small tenants cost you a database. |
| Cross-tenant reporting | Hard. Requires federating across databases. |

Where it wins: Ten to a few hundred enterprise tenants. Strict data residency or regulatory requirements (each tenant in a specific jurisdiction). Customers with wildly different sizes or load profiles (the enterprise client that is 500x the next biggest).

Where it loses: Self-service products with thousands of small tenants. The cost per tenant (infrastructure, migration time, operational overhead) makes the unit economics impossible. Running 10,000 migrations is not hard until it is, and “until it is” tends to arrive at the worst possible moment.
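
The per-tenant migration job is, at its core, a loop that must survive individual failures. A minimal sketch, assuming a hypothetical `$migrate` callback that migrates one tenant database and throws on failure (in practice it might shell out to the Doctrine migrations command with that tenant's connection):

```php
<?php
declare(strict_types=1);

/**
 * Runs a migration callback against every tenant and reports the outcome.
 *
 * @param list<string>           $tenantIds
 * @param callable(string): void $migrate hypothetical callback, throws on failure
 *
 * @return array{migrated: list<string>, failed: array<string, string>}
 */
function migrateAllTenants(array $tenantIds, callable $migrate): array
{
    $migrated = [];
    $failed = [];

    foreach ($tenantIds as $tenantId) {
        try {
            $migrate($tenantId);
            $migrated[] = $tenantId;
        } catch (Throwable $e) {
            // Keep going: one broken tenant should not block the others.
            $failed[$tenantId] = $e->getMessage();
        }
    }

    return ['migrated' => $migrated, 'failed' => $failed];
}
```

The return value matters as much as the loop. After a partial failure, some tenants are on the new schema and some are not, and the application has to tolerate both until the failed list is empty.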

Symfony-specific gotcha: Doctrine’s metadata cache is shared across connections by default, which is fine. But the EntityManager itself carries connection-scoped state, so if you swap connections mid-request, you need to clear the manager or instantiate a tenant-scoped one. Get this wrong and you will silently write one tenant’s data into another tenant’s database. I have seen this happen twice. It is the worst outcome of any pattern.
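
One way to avoid sharing a manager between tenants is to memoize one instance per tenant ID. The sketch below is framework-free; `PerTenantRegistry` is a hypothetical name, and the factory closure stands in for whatever builds a tenant-scoped EntityManager in your application.

```php
<?php
declare(strict_types=1);

// Hypothetical helper: keeps one object (for example an EntityManager)
// per tenant ID so an instance is never reused across tenants.
final class PerTenantRegistry
{
    /** @var array<string, object> */
    private array $instances = [];

    /** @param Closure(string): object $factory builds the object for one tenant ID */
    public function __construct(private Closure $factory) {}

    public function get(string $tenantId): object
    {
        // Build on first use, then always return the same instance for that tenant.
        return $this->instances[$tenantId] ??= ($this->factory)($tenantId);
    }
}
```

In a long-running worker the cached instances would also need to be cleared or closed between messages; that part is left out here.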

Pattern 2: Schema per tenant

One database instance, one database, many schemas. Each tenant lives in its own PostgreSQL schema (or its own MySQL database, since MySQL treats “schema” and “database” as synonyms). On PostgreSQL, the application sets the search_path per request to the tenant’s schema.

What it looks like in Symfony:

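    use Doctrine\DBAL\Connection;
    use Symfony\Component\EventDispatcher\Attribute\AsEventListener;
    use Symfony\Component\HttpKernel\Event\RequestEvent;
    use Symfony\Component\HttpKernel\KernelEvents;

    final readonly class TenantSchemaListener
    {
        public function __construct(
            private TenantContextInterface $tenantContext,
            private Connection $connection,
        ) {}

        #[AsEventListener(event: KernelEvents::REQUEST, priority: 256)]
        public function onRequest(RequestEvent $event): void
        {
            // Sub-requests share the main request's tenant.
            if (!$event->isMainRequest()) {
                return;
            }

            $tenant = $this->tenantContext->current();

            // Quote the schema name as an identifier; it cannot be bound as a parameter.
            $this->connection->executeStatement(
                \sprintf('SET search_path TO %s', $this->connection->quoteIdentifier($tenant->schema())),
            );
        }
    }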

That is the one-line version. The production version has to handle connection pooling (if the connection is reused across requests, the search_path from the last request leaks), Doctrine migrations that need to target a specific schema, and the edge case where a background job processes messages for multiple tenants on the same connection.
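
The leak described above comes from setting the search_path and never putting it back. A minimal sketch of a scoped version, assuming a hypothetical `$execute` callable that runs one SQL statement on the current connection (with DBAL that could wrap `executeStatement`):

```php
<?php
declare(strict_types=1);

function quotePgIdentifier(string $name): string
{
    // PostgreSQL quotes identifiers with double quotes; embedded quotes are doubled.
    return '"' . str_replace('"', '""', $name) . '"';
}

/**
 * Runs $work with the tenant's schema active, then resets the search_path.
 *
 * @param callable(string): void $execute hypothetical: runs one SQL statement
 * @param callable(): mixed      $work    the tenant-scoped unit of work
 */
function withTenantSchema(callable $execute, string $schema, callable $work): mixed
{
    $execute('SET search_path TO ' . quotePgIdentifier($schema));

    try {
        return $work();
    } finally {
        // Always reset, even on exceptions, so the next message or pooled
        // request does not inherit this tenant's schema.
        $execute('RESET search_path');
    }
}
```

Wrapping each message handler or job in a call like this is one way to keep a multi-tenant worker from carrying state between tenants; it does not replace setting the path explicitly at the start of every unit of work.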

What it costs to operate:

| Operation | Cost |
|---|---|
| Onboarding a tenant | Create a schema, run migrations against it. Fast, scriptable, still per-tenant. |
| Running a migration | One database, N schemas. Running N ALTER TABLEs. Slower than one, much faster than N databases. |
| Investigating a support ticket | Connect to the database, SET search_path, query. One connection, many schemas. |
| Scaling one hot tenant | Hard. The database is shared; one tenant’s load hits everyone’s connections. Vertical scale only, unless you migrate the schema out to its own DB. |
| GDPR deletion | DROP SCHEMA is fast and clean. |
| Per-tenant backup restore | Harder than DB-per-tenant. pg_dump per schema is awkward; restoring a single schema into a running DB is non-trivial. |
| Cost floor | Lower than DB-per-tenant. One database instance to pay for, N schemas. |
| Cross-tenant reporting | Doable via UNION ALL across schemas, but queries get long fast. |

Where it wins: The middle of the size spectrum. A few dozen to a few hundred tenants, similar-ish in size, where you want cheap logical isolation without the cost of N databases. Frequently the pattern teams pick when they outgrow shared-schema but cannot justify DB-per-tenant.

Where it loses: Anything requiring strong operational isolation between tenants. The database is still one database. A bad query from one tenant locks tables everyone else uses. A slow tenant-specific migration holds locks across the shared pool. Connection pool exhaustion is a shared-fate event.

Symfony-specific gotcha: Doctrine does not have first-class schema-per-tenant support. You end up with a custom naming strategy, a per-request listener, and (almost always) a bug where background jobs run with the wrong search_path because the Messenger handler did not reset it. I recommend this pattern reluctantly and only with eyes open about the Messenger integration cost.
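
To show why the cross-tenant reporting row in the cost table says the queries get long fast, here is a sketch that generates the UNION ALL for a simple per-tenant row count. The function names are hypothetical, the schema names are assumed to come from a trusted tenant registry, and the string quoting assumes PostgreSQL's default `standard_conforming_strings = on`.

```php
<?php
declare(strict_types=1);

function quotePgIdentifier(string $name): string
{
    return '"' . str_replace('"', '""', $name) . '"';
}

function quotePgLiteral(string $value): string
{
    // Single quotes are doubled inside a PostgreSQL string literal.
    return "'" . str_replace("'", "''", $value) . "'";
}

/**
 * Builds one SELECT per tenant schema and glues them with UNION ALL.
 *
 * @param list<string> $schemas
 */
function crossTenantCountSql(array $schemas, string $table): string
{
    if ($schemas === []) {
        throw new InvalidArgumentException('At least one schema is required.');
    }

    $parts = [];
    foreach ($schemas as $schema) {
        $parts[] = sprintf(
            'SELECT %s AS tenant, count(*) AS total FROM %s.%s',
            quotePgLiteral($schema),
            quotePgIdentifier($schema),
            quotePgIdentifier($table),
        );
    }

    return implode("\nUNION ALL\n", $parts);
}
```

With three hundred tenants this produces a three-hundred-branch query for every report, which is usually the point at which teams start copying data into a separate reporting store.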

Pattern 3: Shared schema

One database, one schema, one set of tables. Every table that contains tenant-scoped data has a tenant_id column. Every query is filtered by WHERE tenant_id = ?. Security is enforced in application code (and, if you are paranoid, with PostgreSQL row-level security as a belt-and-braces backstop).
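
The row-level security backstop can be sketched as a small generator for the statements a migration would run. This assumes a uuid `tenant_id` column and a custom session setting named `app.tenant_id`; the setting name, the policy name `tenant_isolation`, and the helper names are all hypothetical choices for illustration.

```php
<?php
declare(strict_types=1);

function quotePgIdentifier(string $name): string
{
    return '"' . str_replace('"', '""', $name) . '"';
}

/**
 * Statements that put one tenant-scoped table behind row-level security.
 *
 * @return list<string>
 */
function rowLevelSecuritySql(string $table): array
{
    $t = quotePgIdentifier($table);

    return [
        "ALTER TABLE {$t} ENABLE ROW LEVEL SECURITY",
        // FORCE makes the policy apply to the table owner as well.
        "ALTER TABLE {$t} FORCE ROW LEVEL SECURITY",
        // current_setting() raises an error when the setting was never set,
        // so a request that forgot to set the tenant fails closed.
        "CREATE POLICY tenant_isolation ON {$t} "
            . "USING (tenant_id = current_setting('app.tenant_id')::uuid)",
    ];
}

// Run once per request (or per message) with the tenant ID bound as a parameter.
const SET_TENANT_SQL = "SELECT set_config('app.tenant_id', :tenant_id, false)";
```

Superusers and roles with BYPASSRLS skip these policies, so the application has to connect as an ordinary role for the backstop to mean anything.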

What it looks like in Symfony:

The data model has tenant_id on every tenant-scoped entity. Doctrine filters provide the hook for this:

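    use Doctrine\ORM\EntityManagerInterface;
    use Symfony\Component\EventDispatcher\Attribute\AsEventListener;
    use Symfony\Component\HttpKernel\Event\RequestEvent;
    use Symfony\Component\HttpKernel\KernelEvents;

    final readonly class TenantFilterConfigurator
    {
        public function __construct(
            private EntityManagerInterface $em,
            private TenantContextInterface $tenantContext,
        ) {}

        // Enable the filter at the start of the request, before any entity is loaded.
        #[AsEventListener(event: KernelEvents::REQUEST)]
        public function configure(RequestEvent $event): void
        {
            $filter = $this->em->getFilters()->enable('tenant_filter');
            $filter->setParameter('tenant_id', $this->tenantContext->current()->id());
        }
    }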

Where tenant_filter is a Doctrine\ORM\Query\Filter\SQLFilter that adds WHERE tenant_id = :tenant_id to every query on tenant-scoped entities. That is the foreground story.

The background story is: every time a query bypasses the filter, you have a data leak. Filters do not apply to native SQL queries. They do not apply to the DBAL QueryBuilder, which surprises junior engineers who expect it to behave like the ORM one. They do not re-check an entity that EntityManager::find() returns from the identity map without issuing a query. The application bears the burden of getting it right every single time.

What it costs to operate:

| Operation | Cost |
|---|---|
| Onboarding a tenant | Insert a row in tenants. Done. Milliseconds. |
| Running a migration | Run it once. No per-tenant loop. |
| Investigating a support ticket | SELECT ... WHERE tenant_id = ? in every query. Easy to get wrong in ad-hoc tooling. |
| Scaling one hot tenant | You cannot, really. The tenant shares resources with every other tenant. |
| GDPR deletion | DELETE FROM ... WHERE tenant_id = ? across every table. Slow, error-prone, easy to forget a cascading relation. |
| Per-tenant backup restore | Almost impossible without application-level tooling. pg_dump does not filter by column. |
| Cost floor | Very low. One database, one schema, pay for what you use. |
| Cross-tenant reporting | Trivial. It is all one table. |

Where it wins: Self-service products with thousands of tenants. Similar-size tenants. Low-sensitivity data. Workloads where cross-tenant analytics is a first-class feature, not a compliance problem.

Where it loses: Anything with compliance that asks hard questions about tenant isolation. Anything with wildly variable tenant sizes (the one giant tenant pollutes everyone else’s performance). Anything where the ops team needs to work on one tenant’s data in isolation.
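
The GDPR deletion row in the cost table is error-prone mostly because of ordering: child tables have to be emptied before the tables they reference. A minimal sketch, assuming you maintain a hypothetical map from each tenant-scoped table to the tables it references by foreign key:

```php
<?php
declare(strict_types=1);

/**
 * Orders tables so that every child comes before the tables it references.
 *
 * @param array<string, list<string>> $parentsOf table => referenced tables
 *
 * @return list<string>
 */
function deletionOrder(array $parentsOf): array
{
    $order = [];
    $state = []; // table => 'visiting' | 'done'

    $visit = function (string $table) use (&$visit, &$order, &$state, $parentsOf): void {
        if (($state[$table] ?? null) === 'done') {
            return;
        }
        if (($state[$table] ?? null) === 'visiting') {
            throw new LogicException("Foreign-key cycle involving {$table}");
        }

        $state[$table] = 'visiting';
        foreach ($parentsOf[$table] ?? [] as $parent) {
            $visit($parent);
        }
        $state[$table] = 'done';
        $order[] = $table; // Post-order: parents are recorded before children.
    };

    foreach (array_keys($parentsOf) as $table) {
        $visit($table);
    }

    return array_reverse($order); // Reverse it: children first.
}

/** @return list<string> one DELETE per table, tenant ID bound as :tenant_id */
function tenantDeletionSql(array $parentsOf): array
{
    return array_map(
        static fn (string $table): string => sprintf('DELETE FROM "%s" WHERE tenant_id = :tenant_id', $table),
        deletionOrder($parentsOf),
    );
}
```

The map is the part that rots. A test that compares it against the live schema's foreign keys is what catches the table someone added last quarter and forgot to list.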

Symfony-specific gotcha: Doctrine filters are easy to disable accidentally. $em->getFilters()->disable('tenant_filter') exists for legitimate reasons (background admin tasks, cross-tenant reports) and is a footgun. I force every team on this pattern to write a static analysis rule that flags any call to disable() and requires explicit justification. Without that rule, someone will disable the filter in 2026 and you will find out in 2028.
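
A real static analysis rule would live in a tool such as PHPStan. As a rough stand-in, the idea can be sketched with a line-based scan. The `tenant-filter-off:` comment convention and the function name are hypothetical, and a regex will miss calls that are split across lines or built dynamically.

```php
<?php
declare(strict_types=1);

/**
 * Returns the 1-based line numbers where the tenant filter is disabled
 * without a "tenant-filter-off: <reason>" comment on the same or previous line.
 *
 * @return list<int>
 */
function findUnjustifiedFilterDisables(string $source): array
{
    $lines = preg_split('/\R/', $source);
    $violations = [];

    foreach ($lines as $i => $line) {
        if (preg_match('/->\s*disable\(\s*[\'"]tenant_filter[\'"]\s*\)/', $line) !== 1) {
            continue;
        }

        // Look for the justification on this line or the one right above it.
        $context = ($lines[$i - 1] ?? '') . "\n" . $line;
        if (preg_match('/tenant-filter-off:\s*\S+/', $context) !== 1) {
            $violations[] = $i + 1;
        }
    }

    return $violations;
}
```

Run over every file in CI, a non-empty result fails the build, which forces the justification to be written down where a reviewer will see it.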

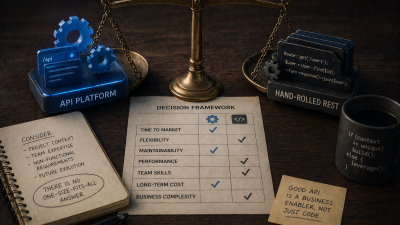

The questions that actually drive the choice

The pattern matrix above is useful, but decisions rarely come down to a single row. The questions that actually decide the pattern, in the conversations I have with founders and CTOs:

How many tenants do you project at 18 months out? If the answer is “ten to thirty,” database-per-tenant is still on the table. If it is “five thousand,” it is not. Nobody runs migrations against five thousand databases per week and stays sane.

Do any of your tenants have their own regulatory requirements? HIPAA tenants and non-HIPAA tenants in the same database is an audit nightmare. Healthcare tenants in their own database, easier. If even one tenant pushes you to DB-per-tenant, everyone goes there or you accept the tooling complexity of running two patterns.

Do you have a largest-tenant problem? If one customer accounts for 40% of the load, they probably need their own infrastructure. That might be DB-per-tenant for that customer specifically (with everyone else on a different pattern). This is legitimate; do not let architectural purity push you away from a pragmatic split.

What does your ops team look like? A two-person platform team cannot run ten thousand databases reliably. They can run one database with ten thousand rows in the tenants table. Match the pattern to the team’s actual capacity to operate it, not the capacity you wish they had.

How often will you run migrations? A team that ships schema changes monthly can tolerate DB-per-tenant better than a team that ships them weekly. Every migration is operational surface; the more you have, the more that surface costs.

How much do your tenants share? If the core feature is “users in different tenants collaborate on shared documents,” the cross-tenant queries that feature requires make shared-schema almost mandatory. If tenants are truly islands, any pattern works.

The pattern falls out of the answers. It almost never falls out of “which is most secure,” because they are all securable, and the effort you spend on getting isolation right is roughly constant across patterns.

The hybrid patterns that actually win in the field

Most of the multi-tenant architectures I have seen hold up at scale are not pure instantiations of one pattern. They are hybrids, tuned to the shape of the business.

Shared schema with tenant-specific extensions. Core tenant-scoped tables live in shared schema. Tenants that need custom fields get a key-value table (tenant_id, field_name, field_value) or a JSON column. Scales cheaply; customization is possible without touching schema.

Shared schema with premium tenants lifted to dedicated databases. 95% of tenants on shared schema, the top 5% (by size or contractual tier) lifted into their own database. Application code has to know how to connect to either. Ugly but pragmatic.

Schema-per-tenant with a single large “public” schema. Tenants get their own schema for tenant-scoped data; a shared public schema holds cross-tenant reference data (countries, currencies, feature flags). Cleaner than trying to replicate reference data into every tenant schema.

Database-per-tenant for enterprise customers, shared schema for self-service. The most common pattern I see at mature SaaS companies. Two code paths, more ops overhead, but the unit economics work for both ends of the customer spectrum.

The trap with hybrids is the operational burden. Every hybrid is two (or more) patterns, which means two runbooks, two sets of tooling, two failure modes. It is worth it when the customer segments are genuinely different; it is not worth it when you just could not decide.
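
For the hybrids that lift some tenants into dedicated databases, the piece of application code that "has to know how to connect to either" can be quite small. A sketch under stated assumptions: `HybridTenantRouter` and its method names are hypothetical, and the parameter arrays are DBAL-style connection parameters.

```php
<?php
declare(strict_types=1);

// Hypothetical router: lifted tenants get their own connection parameters,
// everyone else falls back to the shared database.
final class HybridTenantRouter
{
    /**
     * @param array<string, mixed>                $sharedParams
     * @param array<string, array<string, mixed>> $dedicatedParamsByTenant
     */
    public function __construct(
        private array $sharedParams,
        private array $dedicatedParamsByTenant,
    ) {}

    /** @return array<string, mixed> */
    public function connectionParamsFor(string $tenantId): array
    {
        return $this->dedicatedParamsByTenant[$tenantId] ?? $this->sharedParams;
    }

    // Only tenants on the shared database need the tenant_id filter enforced.
    public function usesSharedSchema(string $tenantId): bool
    {
        return !isset($this->dedicatedParamsByTenant[$tenantId]);
    }
}
```

The routing is the easy part. The two runbooks behind it are where the cost described above shows up.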

How to evaluate after you have picked

Once you have picked, evaluate the pattern every six months against the actual numbers:

- Per-tenant onboarding time. If it is growing, the pattern is fighting you.

- Migration duration. If this is over an hour, you are probably running them too infrequently because they hurt, which means your deprecation debt is growing.

- Support ticket investigation time. If support engineers cannot answer questions about a tenant in under five minutes, the ops ergonomics of the pattern are wrong.

- Noisy neighbor complaints. Tenants reporting “the system was slow today” when only one tenant’s workload changed is a signal that isolation is weaker than you thought.

- GDPR deletion latency. Regulators are asking harder questions about this. If a deletion takes weeks because of the pattern, that is a risk that did not exist five years ago.
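
The thresholds that the list above states numerically can be turned into a small review check. This is only a sketch: the array keys and the function name are hypothetical, and the two limits (one hour for migrations, five minutes for a support lookup) are the ones given in the list, not universal constants.

```php
<?php
declare(strict_types=1);

/**
 * Flags the metrics that have drifted past the thresholds named in the text.
 *
 * @param array{
 *     onboarding_minutes_previous: float|int,
 *     onboarding_minutes_now: float|int,
 *     migration_minutes: float|int,
 *     support_lookup_minutes: float|int
 * } $metrics
 *
 * @return list<string>
 */
function patternReviewFlags(array $metrics): array
{
    $flags = [];

    if ($metrics['onboarding_minutes_now'] > $metrics['onboarding_minutes_previous']) {
        $flags[] = 'onboarding time is growing';
    }
    if ($metrics['migration_minutes'] > 60) {
        $flags[] = 'migrations take over an hour';
    }
    if ($metrics['support_lookup_minutes'] > 5) {
        $flags[] = 'support lookups take over five minutes';
    }

    return $flags;
}
```

Noisy-neighbor complaints and deletion latency are left out on purpose: the text gives no numeric threshold for them, so they stay a judgment call in the review.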

If any of these metrics drift, the pattern is not necessarily wrong, but the discussion needs to happen. Sometimes you add tooling. Sometimes you lift a tenant into its own database. Sometimes, rarely, you migrate from one pattern to another. That is a major project; do it with eyes open.

Migrating between patterns is a project, not a refactor

One last note, because I get the question at least twice a year. Teams that picked shared-schema and are now feeling the noisy-neighbor pain ask whether they can “move to DB-per-tenant.” Technically yes. Practically, it is a multi-month project.

The work: building a provisioning pipeline for new databases, extending the application to know about multiple connections, writing the migration tooling to carve existing tenants out of the shared schema, carving them out without downtime (expand-contract, same patterns as in my Doctrine migrations essay), updating every query pattern that assumed shared-schema semantics, and re-running every integration test.

I have led this kind of migration twice. Both took four to six months of senior engineering time. Both were worth it because the business had changed enough that the original pattern no longer fit. Do not underestimate the project, and do not undertake it unless the pain is clearly structural rather than fixable with tooling.

The non-answer

If you hoped this essay would tell you which pattern is best, you are reading the wrong essay. None of them is best. The best pattern is the one whose operational cost matches the operational budget of the team running it, whose performance profile matches the shape of the customers it serves, and whose isolation guarantees match the compliance reality of the business.

What you can do is refuse to pick by habit or by ideology. Draw the question matrix. Answer it honestly. Pick the pattern the answers point to. Build it. Revisit the decision every six months against the metrics. That is the practice; the particular pattern that falls out of it is secondary.

If you are picking a multi-tenant pattern for a new product, or living with one that no longer fits, my scaling engagement walks through exactly this decision matrix with your team. One-week diagnostic, a documented pattern choice with the tradeoffs captured, and (if you are migrating) a phased plan that keeps the business running through the transition.

References

- Architectural approaches for storage and data in multitenant solutions (Microsoft Azure Architecture Center): Microsoft’s catalogue of the same patterns (shared database, sharded, dedicated per tenant) with the antipatterns to avoid.

- Doctrine ORM: Filters: reference for SQLFilter and the enable/disable/suspend semantics used in the shared-schema pattern.

- PostgreSQL: Row Security Policies: the database-level backstop for shared-schema tenant isolation.

- PostgreSQL: Schemas: documents CREATE SCHEMA, search_path, and the rules behind the schema-per-tenant pattern.

- Zero-Downtime Doctrine Migrations: companion essay on the expand-contract mechanics needed to carve tenants out of a shared schema without downtime.