The first time a team adds caching to a slow Symfony application, the result is usually disappointment. The page is faster, but only sometimes. Some users see stale data. The deploy that should have been routine causes a strange outage two days later when a cache key collision finally triggers. After a month of patches, the cache is responsible for more incidents than the database it was supposed to protect.

I have seen this pattern enough times to be confident about where it goes wrong. It is rarely the technology. The Symfony cache component is fine. Redis is fine. Varnish is fine. The problem is that caching is five separate decisions and most teams treat it as one.

This essay walks through those five decisions in the order I make them when I am sizing up a slow application. Get them right, in this order, and the cache earns its keep. Get them wrong, in any order, and you have built a faster way to be wrong.

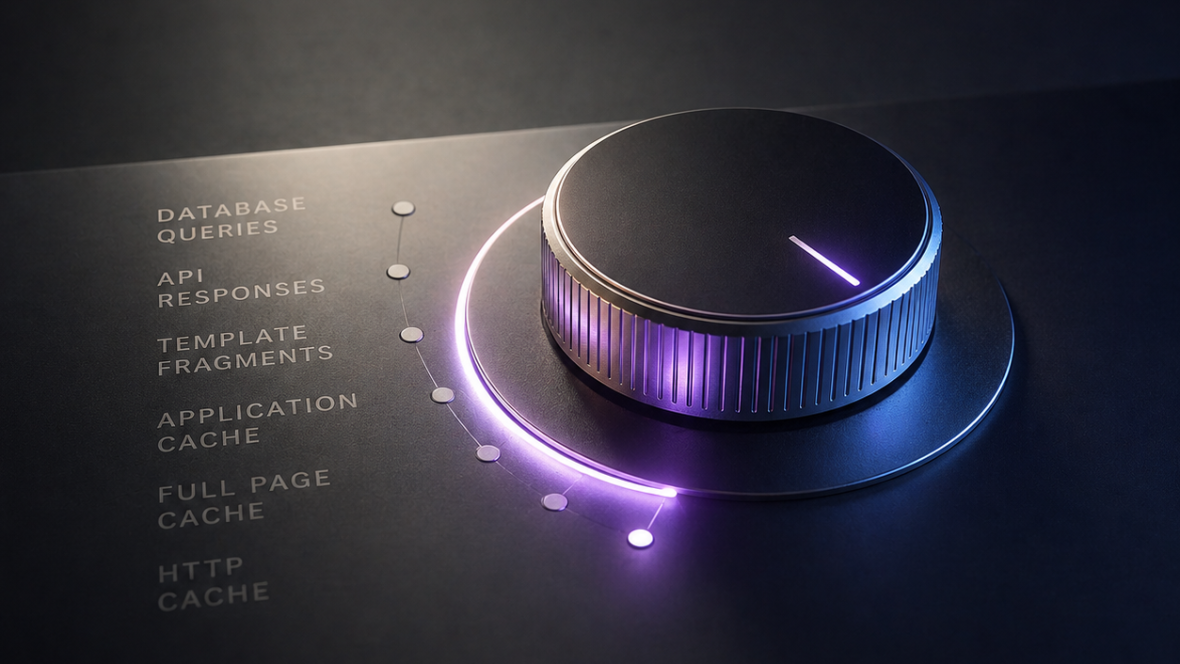

Decision 1: which layer

Before any code, decide where the cache lives. Each layer has a different cost, a different invalidation story, and a different failure mode.

The four layers worth considering, ordered from outside to inside:

- HTTP cache (Varnish, CloudFront, Cloudflare). Caches whole responses. Cheap to operate, scales horizontally, invalidation is the hard part. Best for anonymous traffic and any response that does not depend on the user.

- Application cache (Symfony cache component over Redis or Memcached). Caches whatever your code decides. Most flexible, most code to write. Good for partial responses, computed values, fragments.

- Doctrine cache (result, query, metadata caches). Caches Doctrine’s own work. Set it once, mostly invisible. Good for read-heavy applications where the same queries fire repeatedly.

- PHP runtime caches (OPcache, APCu). Cache compiled PHP and small, fast values local to the server. Cheap, fast, very limited capacity. Good for hot config and parsed metadata.

The mistake I see most often is teams reaching for the application cache when the HTTP cache would have done the job for a tenth of the code. A homepage for an anonymous visitor that takes 800ms to render needs an HTTP cache, not a clever Redis layer. Conversely, a personalised dashboard that depends on the logged-in user is not a candidate for HTTP caching at all, no matter how clever the Vary headers.

The decision tree:

- Is the response the same for all viewers, or can it be? HTTP cache.

- Is part of the response the same for all viewers, but the rest is personalised? HTTP cache for the static parts, ESI or fragment caching for the rest.

- Is the work being repeated by Doctrine across requests with no parameter change? Doctrine cache.

- Is a specific computed value reused across users with the same input? Application cache.

- None of the above? Probably do not cache. Make the underlying operation faster.

The fifth point matters. Caching is a way of paying complexity to avoid latency. If the latency is not unbearable, the complexity is not worth it.
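The decision tree above can be written down as a small pure function, which is sometimes a useful way to force the questions to be answered in order. This is only a sketch: the enum, the function name, and the boolean inputs are hypothetical and not part of any Symfony API.

```php
<?php

declare(strict_types=1);

// Hypothetical names throughout: this mirrors the decision tree, not a library API.
enum CacheLayer: string
{
    case Http = 'HTTP cache';
    case HttpWithFragments = 'HTTP cache plus ESI or fragment caching';
    case Doctrine = 'Doctrine cache';
    case Application = 'application cache';
    case None = 'do not cache, make the operation faster';
}

function suggestCacheLayer(
    bool $sameForAllViewers,
    bool $partlySharedPartlyPersonalised,
    bool $repeatedIdenticalQueries,
    bool $computedValueSharedAcrossUsers,
): CacheLayer {
    // Order matters: the first question answered "yes" decides the layer.
    return match (true) {
        $sameForAllViewers => CacheLayer::Http,
        $partlySharedPartlyPersonalised => CacheLayer::HttpWithFragments,
        $repeatedIdenticalQueries => CacheLayer::Doctrine,
        $computedValueSharedAcrossUsers => CacheLayer::Application,
        default => CacheLayer::None,
    };
}

// An anonymous homepage: identical for every viewer.
echo suggestCacheLayer(true, false, false, false)->value, PHP_EOL;
// A request where none of the questions apply.
echo suggestCacheLayer(false, false, false, false)->value, PHP_EOL;
```

The value of writing it this way is the ordering: the function cannot return the application cache until the HTTP and Doctrine questions have been answered "no".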

Decision 2: how to design keys

Once the layer is chosen, the cache key is the next decision, and the one teams underestimate the most. A bad key creates either invisible misses (cache is full of close-but-wrong entries) or invisible collisions (different data ends up under the same key). Both are debugging nightmares.

The rules I follow:

Include every input that affects the output. Locale, tenant ID, currency, role, feature flag state. Forget one, and you serve French content to German users, or admin data to anonymous viewers, until someone notices.

Include the version of the structure. Bump the version when the cached value’s shape changes. Old entries become misses instead of decoded-into-the-wrong-shape errors.

Make the key human-readable. Future-you, debugging a cache issue at 2am, needs to be able to look at a key and know what it is.

    namespace App\Cache;

    use App\Domain\Identifier\TenantId;
    use Webmozart\Assert\Assert;

    final readonly class ProductListingCacheKey
    {
        private const VERSION = 'v3';

        public function __construct(
            private TenantId $tenantId,
            private string $locale,
            private string $currency,
            private int $page,
        ) {
            Assert::regex($locale, '/^[a-z]{2}(_[A-Z]{2})?$/');
            Assert::length($currency, 3);
            Assert::greaterThanEq($page, 1);
        }

        public function toString(): string
        {
            return \sprintf(
                'product_listing.%s.%s.%s.%s.page_%d',
                self::VERSION,
                $this->tenantId->toString(),
                $this->locale,
                \mb_strtolower($this->currency),
                $this->page,
            );
        }
    }

Why a value object: the inputs are validated once, the format is centralised, and adding a new input is one place to change. The alternative is \sprintf('listing_%s_%s_%d', ...) scattered across five callers, which is the seed of every cache-key bug I have ever debugged.
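To see what the first two rules buy you, here is a dependency-free sketch that builds the same key format with a plain function. The function name and the sample tenant ID are made up for illustration, and validation is left out; real code would go through the value object.

```php
<?php

declare(strict_types=1);

// Hypothetical helper: same key format as ProductListingCacheKey, minus validation.
function productListingKey(
    string $version,
    string $tenantId,
    string $locale,
    string $currency,
    int $page,
): string {
    return sprintf(
        'product_listing.%s.%s.%s.%s.page_%d',
        $version,
        $tenantId,
        $locale,
        strtolower($currency),
        $page,
    );
}

$french = productListingKey('v3', 'tenant-42', 'fr_FR', 'EUR', 1);
$german = productListingKey('v3', 'tenant-42', 'de_DE', 'EUR', 1);
$nextShape = productListingKey('v4', 'tenant-42', 'fr_FR', 'EUR', 1);

echo $french, PHP_EOL;            // product_listing.v3.tenant-42.fr_FR.eur.page_1
var_dump($french !== $german);    // bool(true): locale is part of the key
var_dump($french !== $nextShape); // bool(true): a version bump turns old entries into misses
```

Drop the locale argument from the format string and the first comparison becomes false, which is exactly the "French content to German users" bug.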

Decision 3: TTL is a failure budget, not a guess

Most TTL discussions sound like “60 seconds, or maybe 5 minutes, let’s start there and see.” That is fine for a prototype. It is not a strategy.

The right way to choose a TTL is to ask: “How long am I willing for this data to be wrong?”

- A product listing on a public e-commerce page, refreshed every 5 minutes: probably fine. Customers expect catalog updates to take a moment.

- An inventory count on the same page: not fine. Showing “in stock” when it is not is a real customer problem.

- A user profile cached for 1 hour: probably fine.

- An access control decision cached for 1 hour: catastrophic. The user you just removed from the org still has admin access for 59 minutes.

The pattern: short TTLs for security-relevant or business-critical data, longer TTLs for the rest.
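One way to make that budget explicit is to name the staleness tolerance per kind of data and keep the numbers in one place. The sketch below is an illustration under assumptions: the enum, its cases, and the two short figures are invented here; only the 5-minute and 1-hour values come from the examples above.

```php
<?php

declare(strict_types=1);

// Hypothetical classification of cached data by how long it may be wrong.
enum DataKind
{
    case ProductListing;
    case UserProfile;
    case InventoryCount;
    case AccessDecision;
}

/** Returns the longest time, in seconds, this kind of data may be stale. */
function stalenessBudget(DataKind $kind): int
{
    return match ($kind) {
        DataKind::ProductListing => 300,  // 5 minutes, from the listing example
        DataKind::UserProfile => 3600,    // 1 hour, from the profile example
        DataKind::InventoryCount => 10,   // assumed figure: business-critical, keep it short
        DataKind::AccessDecision => 5,    // assumed figure: security-relevant, keep it shorter
    };
}

echo stalenessBudget(DataKind::UserProfile), PHP_EOL;    // 3600
echo stalenessBudget(DataKind::AccessDecision), PHP_EOL; // 5
```

The exact numbers matter less than the fact that each one is a named, reviewable decision rather than a literal buried in a callback.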

Then there is a second consideration that teams miss: stampede protection. If 1,000 simultaneous requests miss the cache at the same instant (because the key just expired), you do not get one expensive recompute, you get 1,000 of them, all writing the same value back. The database falls over, then the cache repopulates, then everything is fine, and you have an unexplained outage.

The Symfony cache component handles this with CacheInterface::get:

    namespace App\Catalog;

    use Symfony\Contracts\Cache\CacheInterface;
    use Symfony\Contracts\Cache\ItemInterface;

    final readonly class ProductListing
    {
        public function __construct(
            private CacheInterface $cache,
            private ProductRepositoryInterface $products,
        ) {
        }

        /**
         * @return list<Product>
         */
        public function forPage(int $page): array
        {
            return $this->cache->get(
                \sprintf('product_listing.v3.page_%d', $page),
                function (ItemInterface $item) use ($page): array {
                    $item->expiresAfter(300);

                    return $this->products->paginate($page);
                },
            );
        }
    }

The cache contract handles locking internally: on each host, only one process recomputes a given key while the others wait. That property alone is worth using the contract over the raw PSR-6 interface.

For really hot keys, layer in early expiration:

    return $this->cache->get(
        $key,
        function (ItemInterface $item) use ($page): array {
            $item->expiresAfter(300);
            // tag() requires a tag-aware cache pool; on a plain pool it throws.
            $item->tag(['product_listing']);

            return $this->products->paginate($page);
        },
        2.0, // beta: increase early-recompute aggressiveness for hot keys
    );

The beta parameter controls probabilistic early expiration. 0 disables it, 1.0 is the default, and higher values refresh earlier on average. Most requests still hit the cache; occasional requests trigger an early refresh so users do not pile up on a cold key.
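The idea behind probabilistic early expiration fits in a single inequality. The sketch below follows the XFetch formulation from the cache-stampede literature, where a request recomputes early if now - delta * beta * ln(rand) >= expiry. The function and parameter names are hypothetical, the random draw is passed in so the example is deterministic, and the component's internal implementation may differ in detail.

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical helper, not a Symfony API.
 *
 * @param float $now            current time, in seconds
 * @param float $expiry         time at which the entry expires, in seconds
 * @param float $computeSeconds how long the last recompute took (delta)
 * @param float $beta           0 disables early refresh, higher values refresh earlier
 * @param float $rand           uniform random number in (0, 1]
 */
function shouldRecomputeEarly(
    float $now,
    float $expiry,
    float $computeSeconds,
    float $beta,
    float $rand,
): bool {
    // log($rand) is <= 0, so subtracting it gives "now" a random head start.
    return $now - $computeSeconds * $beta * log($rand) >= $expiry;
}

$expiry = 300.0;

// 2 seconds before expiry, last recompute took 0.5s, rare small draw of 0.01:
var_dump(shouldRecomputeEarly(298.0, $expiry, 0.5, 1.0, 0.01)); // bool(true)
// Same draw, but beta = 0 switches early refresh off:
var_dump(shouldRecomputeEarly(298.0, $expiry, 0.5, 0.0, 0.01)); // bool(false)
// A typical draw near 1 leaves the entry alone until it really expires:
var_dump(shouldRecomputeEarly(298.0, $expiry, 0.5, 1.0, 0.9));  // bool(false)
```

Because the head start scales with both the recompute time and beta, slow-to-build entries and higher beta values are refreshed earlier, while most requests still see a plain cache hit.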

Decision 4: invalidation is a code path, not a wish

Phil Karlton’s quip about cache invalidation being one of the two hard things in computer science is a cliché because it is true. Most teams underestimate it by treating invalidation as a side effect: “the TTL will catch it eventually.” The TTL will catch most of it eventually. The “most” and “eventually” are where the bugs live.

Three honest strategies, in order of operational cost:

TTL only. Cheapest, least correct. Acceptable when staleness is tolerable and the TTL is short enough to bound the wrongness. The bug pattern: someone changes the data, and readers keep seeing the old value for up to TTL seconds.

TTL plus explicit invalidation on write. When the write code knows which keys are affected, invalidate them. Better correctness, more code:

    namespace App\Catalog;

    use Symfony\Component\Cache\Adapter\TagAwareAdapterInterface;

    final readonly class ProductWriter
    {
        public function __construct(
            private ProductRepositoryInterface $products,
            private TagAwareAdapterInterface $cache,
        ) {
        }

        public function update(Product $product): void
        {
            $this->products->save($product);

            $this->cache->invalidateTags(['product_listing', \sprintf('product_%s', $product->getId()->toString())]);
        }
    }

Tag-based invalidation lets you target every cache entry that was tagged with the changed product, without having to know each key. It only works if the read path attaches those tags when it stores the entry. It costs you a tag-aware adapter and a bit of cache-storage overhead. It buys you correctness that scales with the number of entries.
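Conceptually, a tag is a second index over the same entries. The toy class below shows the idea with in-memory arrays. It is an illustration only, with an invented class name and made-up product IDs, and it does not reflect how Symfony's tag-aware adapters store tags internally.

```php
<?php

declare(strict_types=1);

// Toy illustration of tagging; not how a real adapter is implemented.
final class ToyTaggedCache
{
    /** @var array<string, mixed> */
    private array $values = [];

    /** @var array<string, list<string>> tag => keys stored under that tag */
    private array $keysByTag = [];

    /** @param list<string> $tags */
    public function set(string $key, mixed $value, array $tags): void
    {
        $this->values[$key] = $value;
        foreach ($tags as $tag) {
            $this->keysByTag[$tag][] = $key;
        }
    }

    public function has(string $key): bool
    {
        return array_key_exists($key, $this->values);
    }

    /** @param list<string> $tags */
    public function invalidateTags(array $tags): void
    {
        foreach ($tags as $tag) {
            foreach ($this->keysByTag[$tag] ?? [] as $key) {
                unset($this->values[$key]);
            }
            unset($this->keysByTag[$tag]);
        }
    }
}

$cache = new ToyTaggedCache();
$cache->set('product_listing.v3.page_1', ['p1', 'p2'], ['product_listing', 'product_p1', 'product_p2']);
$cache->set('product_listing.v3.page_2', ['p3'], ['product_listing', 'product_p3']);

// Product p3 changed: only the page that contains it is dropped.
$cache->invalidateTags(['product_p3']);

var_dump($cache->has('product_listing.v3.page_1')); // bool(true)
var_dump($cache->has('product_listing.v3.page_2')); // bool(false)
```

The write path never needed to know that p3 lived on page 2; it only needed the tag name.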

Event-driven invalidation. When the write does not know which keys are affected (changes that fan out across many caches), an event listener reacts to domain events and invalidates broadly:

    namespace App\Catalog\EventSubscriber;

    use App\Domain\Event\ProductPriceChanged;
    use Symfony\Component\Cache\Adapter\TagAwareAdapterInterface;
    use Symfony\Component\EventDispatcher\Attribute\AsEventListener;

    final readonly class InvalidateProductCacheOnPriceChange
    {
        public function __construct(
            private TagAwareAdapterInterface $cache,
        ) {
        }

        #[AsEventListener(event: ProductPriceChanged::class)]
        public function __invoke(ProductPriceChanged $event): void
        {
            $this->cache->invalidateTags([
                'product_listing',
                \sprintf('product_%s', $event->productId->toString()),
                \sprintf('category_%s', $event->categoryId->toString()),
            ]);
        }
    }

The trade is that the listener is now a piece of infrastructure that has to be maintained alongside the write paths. When a new write path is added that should invalidate caches, somebody has to remember the listener exists. It is the right call when the alternative is a write path that tries to know about every cache, which is its own kind of brittle.

The pattern that does not work: invalidating immediately before the write. The window between “cache cleared” and “write committed” is where another reader fills the cache with the pre-write value, and now you are stuck with stale data until the TTL expires.

Invalidate after the write is committed, not before.
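The race is easier to see when the interleaving is spelled out. The sketch below replays both orderings step by step, with plain arrays standing in for the database and the cache. Everything in it (names, values, the single competing reader) is a made-up illustration, not production code.

```php
<?php

declare(strict_types=1);

/**
 * Replays one writer and one competing reader, sequentially, and returns
 * what the cache holds once both are done (null means "empty").
 */
function replay(bool $invalidateBeforeWrite): ?string
{
    $db = ['price' => 'old'];
    $cache = ['price' => 'old'];

    // A reader that arrives mid-write: on a miss it refills the cache from the database.
    $reader = static function () use (&$db, &$cache): void {
        $cache['price'] ??= $db['price'];
    };

    if ($invalidateBeforeWrite) {
        unset($cache['price']); // 1. cache cleared
        $reader();              // 2. reader refills with the pre-write value
        $db['price'] = 'new';   // 3. write commits, cache is now stale
    } else {
        $db['price'] = 'new';   // 1. write commits
        $reader();              // 2. reader still hits the old entry (briefly stale)
        unset($cache['price']); // 3. invalidation removes it; the next read refills fresh
    }

    return $cache['price'] ?? null;
}

var_dump(replay(true));  // string(3) "old": stale until the TTL expires
var_dump(replay(false)); // NULL: empty cache, the next reader loads "new"
```

Both orderings serve one stale read, but only the first one leaves the stale value behind in the cache.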

Decision 5: what not to cache

Caching has a cost. The cost is not just the storage. It is the complexity of every other engineer having to reason about whether they are reading fresh data or not, of every deploy having to think about whether stale data is dangerous, of every bug investigation having to consider “is this a cache issue?”

That cost is worth paying for things that are slow and hot. It is not worth paying for things that are slow and cold, or fast and hot, or fast and cold.

The list of things that I push back on caching:

Anything personalised, where the per-user computation is cheap. The cache key has to include the user, which means it is not actually shared. You have built a per-user storage layer for no benefit.

Anything that already runs in microseconds. A 200-microsecond function is not slow. Caching it adds a network round trip to Redis (~500 microseconds) and saves nothing.

Anything where the source of truth is the cache. If the cache is the only place a value lives, it is no longer a cache, it is a database that loses data on restart. This sounds obvious; I have seen it three times this year.

Anything you cannot describe the invalidation rules for in one sentence. If “when does this entry need to go away” is hard to articulate, the cache is going to be wrong, and you will not know when.
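The microsecond case in the list above can be checked with back-of-the-envelope arithmetic. Assuming every call pays one cache round trip and a miss additionally pays for the computation, the expected cost per call is roundTrip + (1 - hitRate) * compute. The function below is a hypothetical helper using the figures from the text; it ignores the cost of writing the value back on a miss.

```php
<?php

declare(strict_types=1);

// Hypothetical helper: expected cost of one call that goes through a remote cache.
function expectedCachedMicros(float $computeMicros, float $roundTripMicros, float $hitRate): float
{
    // Every call pays the lookup; only misses also pay for the computation.
    return $roundTripMicros + (1.0 - $hitRate) * $computeMicros;
}

// A 200-microsecond function behind a ~500-microsecond Redis round trip, perfect hit rate:
echo round(expectedCachedMicros(200.0, 500.0, 1.0)), PHP_EOL;      // 500, slower than the 200 it replaces
// An 800ms page render with a 95% hit rate is a different story:
echo round(expectedCachedMicros(800_000.0, 500.0, 0.95)), PHP_EOL; // 40500
```

Even with a hit rate of 100%, the fast function gets slower, while the slow render drops from 800ms to roughly 40ms on average.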

The version of the table that I keep on a sticky note when I am thinking about a new cache:

| Question | Yes | No |

|---|---|---|

| Is the underlying operation slow? | Continue | Do not cache |

| Is the result the same for many viewers? | Continue | Strongly reconsider |

| Can you write the invalidation rule in one sentence? | Continue | Do not cache yet |

| Is the system correct if the cache is empty? | Continue | This is a database, treat it like one |

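Three of those (slow operation, one-sentence invalidation rule, correct when the cache is empty) must be “Continue” before any caching code gets written; a “No” on the shared-result question is a strong warning rather than a veto.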

Putting it together

A worked example: a category page on an e-commerce site, slow because it does six joins across a poorly indexed schema.

- Layer. Public, anonymous, high traffic. The HTTP cache layer is the primary tool. Varnish or CloudFront with a 60-second TTL on the page itself.

- Key design. The HTTP cache key includes the URL, the device class (mobile vs desktop) via Vary, and the locale. A logged-in user request bypasses the cache entirely via a Cache-Control: private, no-store header set in the controller.

- TTL. 60 seconds for the HTML, 1 hour for the underlying product images (which are static enough). Stampede protection comes from Varnish’s grace mode.

- Invalidation. The category page is purged from Varnish via the API on three events: a product is added, removed, or its category changes. The CMS triggers the purge as part of its publish flow.

- What we did not cache. The personalised “recently viewed” sidebar, which is rendered as an ESI fragment with its own cache headers, populated per-user from the application cache.

The slow page is now fast for 99% of viewers, the personalised slice is fresh, and the invalidation paths are three lines of code in the publish flow. Total Redis usage: zero. Total team complexity added: small. Total latency removed: about 700ms p95.
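The split between anonymous and logged-in traffic in this example comes down to which headers the controller emits. Here is a framework-free sketch, assuming the 60-second shared TTL from the example, assuming the locale is negotiated via Accept-Language, and assuming the device class arrives in a normalised request header (called X-Device-Class here, a made-up name):

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical helper for the worked example.
 *
 * @return array<string, string> response headers for the category page
 */
function categoryPageCacheHeaders(bool $loggedIn): array
{
    if ($loggedIn) {
        // Logged-in requests must never be stored by a shared cache.
        return ['Cache-Control' => 'private, no-store'];
    }

    return [
        // s-maxage applies to shared caches such as Varnish or CloudFront.
        'Cache-Control' => 'public, s-maxage=60',
        // Vary makes locale and device class part of the cache key.
        'Vary' => 'Accept-Language, X-Device-Class',
    ];
}

foreach (categoryPageCacheHeaders(false) as $name => $value) {
    echo $name, ': ', $value, PHP_EOL;
}
```

In a Symfony controller the same values would be set on the Response object rather than returned as an array.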

That is the shape of caching that pays off. The shape that does not pay off is the one where the team adds a Redis layer because they read a blog post about Redis, and three months later they cannot reason about whether their data is fresh.

Caching is five decisions, not one. Make them in this order, and the cache earns its keep. Make them out of order, or skip one, and you have shipped a new way for your application to be wrong.

