A scaling problem almost never arrives as a single dramatic failure. It arrives as a slow, increasingly visible accumulation of small problems: response times creeping up, the cron jobs taking longer than the windows allow, the staging environment refusing to seed because the data set is too big, the deployment that used to take three minutes taking nineteen, the on-call engineer spending more weekends explaining things to the CEO than fixing things in code.

By the time the scaling conversation reaches the leadership team, the team has usually already tried the obvious moves. They added Redis. They moved sessions out of the database. They put Cloudflare in front. They increased the PHP-FPM worker count. None of those moves are wrong, exactly. They are just not the moves that scaling actually requires, and so they buy three weeks of relief before the same symptoms come back, except now also with a Redis cluster to maintain.

This essay is a checklist. Not a list of trendy patterns. Not a list of services you should adopt. Nine items, in the order I would tackle them on a typical PHP/Symfony application that has outgrown its first architecture and needs to be honest about what comes next.

I have ranked them by how often I see teams under-invest in them, with the top of the list being the work that pays back the most and is skipped the most.

1. Get the database boring before you do anything else

The single highest-leverage scaling move on any PHP application is making the database boring. Boring means: predictable query patterns, an index for every hot path, no surprise sequential scans, and no implicit N+1 patterns hiding behind Doctrine relations.

Most teams skip this because the database does not feel slow until it does, and by the time it does, the cost of fixing the patterns is much higher than it would have been six months earlier. The fix is to make slow query analysis a weekly ritual, not an incident response.

The minimum viable instrumentation:

```yaml
# config/packages/doctrine.yaml
doctrine:
    dbal:
        profiling: '%kernel.debug%'

# config/packages/monolog.yaml (prod)
monolog:
    handlers:
        slow_queries:
            type: stream
            path: '%kernel.logs_dir%/slow_queries.log'
            level: info
            channels: ['doctrine']
```

Pair that with pg_stat_statements (or the MySQL slow query log) enabled in production, and look at the top twenty queries by total time once a week. Not the slowest queries. The queries that consume the most cumulative time across all calls. Those are almost always the ones that need an index, not the ones that take 800ms once a day.
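To make the difference between "slowest" and "most expensive" concrete, here is a minimal PHP sketch that ranks query statistics by cumulative time. It assumes you have already exported a call count and a mean time per normalized query (pg_stat_statements exposes these as calls and mean_exec_time on PostgreSQL 13 and later). The function name, the array shape, and the sample queries are hypothetical.

```php
<?php

declare(strict_types=1);

/**
 * Rank query statistics by cumulative time (calls x mean), highest first.
 *
 * @param list<array{query: string, calls: int, mean_ms: float}> $stats
 *
 * @return list<array{query: string, calls: int, mean_ms: float, total_ms: float}>
 */
function rankByTotalTime(array $stats, int $limit = 20): array
{
    $ranked = array_map(
        static fn (array $row): array => $row + ['total_ms' => $row['calls'] * $row['mean_ms']],
        $stats
    );

    // Sort descending by cumulative time, not by per-call latency.
    usort($ranked, static fn (array $a, array $b): int => $b['total_ms'] <=> $a['total_ms']);

    return array_slice($ranked, 0, $limit);
}

// Hypothetical sample: one slow nightly report versus one cheap but very hot lookup.
$sample = [
    ['query' => 'SELECT * FROM nightly_report', 'calls' => 1, 'mean_ms' => 800.0],
    ['query' => 'SELECT * FROM product WHERE category_id = $1', 'calls' => 400000, 'mean_ms' => 4.0],
];

foreach (rankByTotalTime($sample) as $row) {
    printf("%12.0f ms total  %s\n", $row['total_ms'], $row['query']);
}
```

In this sample the 4 ms lookup ranks first with 1,600,000 ms of cumulative time, against 800 ms for the report that looks so much worse in a slow query log.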

Then, on the application side, audit your Doctrine fetch joins. The pattern that kills most Symfony apps at scale is a controller that fetches a paginated list and lazy-loads three relations per row. Twenty rows per page, three lazy loads per row: that is up to 61 queries per request (one for the list, sixty for the relations) before you have done any actual work. Fix it with explicit addSelect() and join() calls in your repositories, and write a test that asserts query counts on the hot endpoints.
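A sketch of what such a repository method can look like. The Order entity and its three to-one relations (customer, shippingAddress, status) are hypothetical, and the class assumes a standard DoctrineBundle setup.

```php
<?php

declare(strict_types=1);

namespace App\Repository;

use App\Entity\Order; // hypothetical entity
use Doctrine\Bundle\DoctrineBundle\Repository\ServiceEntityRepository;
use Doctrine\Persistence\ManagerRegistry;

/**
 * @extends ServiceEntityRepository<Order>
 */
final class OrderRepository extends ServiceEntityRepository
{
    public function __construct(ManagerRegistry $registry)
    {
        parent::__construct($registry, Order::class);
    }

    /**
     * Load one page of orders and their relations in a single query.
     *
     * @return list<Order>
     */
    public function findPageWithRelations(int $page, int $perPage = 20): array
    {
        return $this->createQueryBuilder('o')
            // addSelect() is what turns a plain join into a fetch join:
            // the joined entities are hydrated now instead of lazy-loaded later.
            ->addSelect('c', 'a', 's')
            ->join('o.customer', 'c')
            ->leftJoin('o.shippingAddress', 'a')
            ->leftJoin('o.status', 's')
            ->orderBy('o.id', 'DESC')
            ->setFirstResult(($page - 1) * $perPage)
            ->setMaxResults($perPage)
            ->getQuery()
            ->getResult();
    }
}
```

The relations here are deliberately to-one. Fetch-joining a to-many collection multiplies the result rows, so setMaxResults() no longer counts entities; in that case wrap the query in Doctrine's Paginator (Doctrine\ORM\Tools\Pagination\Paginator) rather than limiting it directly.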

2. Identify your three slowest endpoints, and instrument them properly

Most teams do not actually know which endpoints are slow in production. They know which endpoints feel slow when they click around in staging. Those are not the same set.

Set up real per-endpoint metrics. The minimum acceptable is:

- p50, p95, p99 latency per route, per method

- Error rate per route

- Request count per route

Tools that get you there cheaply: Symfony’s built-in profiler (development only), blackfire/php-sdk for production sampling, or an OpenTelemetry exporter feeding any backend you already use (Datadog, Grafana Cloud, Honeycomb). The tool matters less than the discipline of looking at the numbers weekly.
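If it helps to see what those three numbers mean mechanically, here is a small PHP sketch that computes them from raw request samples. It is an illustration under assumptions, not a replacement for a metrics backend: the sample shape is hypothetical, percentiles use the nearest-rank method, and "error" is assumed to mean a 5xx status.

```php
<?php

declare(strict_types=1);

/**
 * Nearest-rank percentile over an ascending, non-empty list of latencies.
 *
 * @param non-empty-list<float> $sortedMs
 */
function percentile(array $sortedMs, int $p): float
{
    // ceil(p * n / 100) in integer arithmetic, then converted to a zero-based index.
    $rank = intdiv($p * count($sortedMs) + 99, 100);

    return $sortedMs[max(0, $rank - 1)];
}

/**
 * @param list<array{route: string, method: string, ms: float, status: int}> $samples
 *
 * @return array<string, array{count: int, error_rate: float|int, p50: float, p95: float, p99: float}>
 */
function summarizeByRoute(array $samples): array
{
    $byRoute = [];
    foreach ($samples as $sample) {
        $key = $sample['method'] . ' ' . $sample['route'];
        $byRoute[$key]['ms'][] = $sample['ms'];
        $byRoute[$key]['errors'] = ($byRoute[$key]['errors'] ?? 0) + ($sample['status'] >= 500 ? 1 : 0);
    }

    $summary = [];
    foreach ($byRoute as $key => $data) {
        sort($data['ms']);
        $count = count($data['ms']);
        $summary[$key] = [
            'count' => $count,
            'error_rate' => $data['errors'] / $count,
            'p50' => percentile($data['ms'], 50),
            'p95' => percentile($data['ms'], 95),
            'p99' => percentile($data['ms'], 99),
        ];
    }

    return $summary;
}

// Hypothetical data: 100 requests to one route, latencies 1..100 ms, two server errors.
$samples = [];
for ($i = 1; $i <= 100; $i++) {
    $samples[] = ['route' => '/orders', 'method' => 'GET', 'ms' => (float) $i, 'status' => $i > 98 ? 500 : 200];
}

print_r(summarizeByRoute($samples)['GET /orders']);
```

In production you would normally let the metrics backend build these from histograms instead of keeping raw samples in memory.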

Once you can see the data, the rule is simple: do not optimize the endpoint that feels slow, optimize the endpoint that takes the most aggregate time across all users. A 200ms endpoint hit a million times a day is a much bigger lever than an 8-second endpoint hit twice.

3. Cache the right thing, not the easy thing

Almost every team I work with has a Redis cluster. Almost no team uses it for the right things.

The right things to cache, in order of leverage:

- HTTP responses with a real cache key. Symfony’s HTTP cache (or a Varnish in front, or the built-in EsiResponseListener for fragments) on routes that can tolerate a 60-second TTL. This is the highest leverage and the most often skipped because “we have a logged-in app.” Most logged-in apps still have public-ish routes (marketing pages, public profiles, search results without filters) that can be HTTP-cached with private=false and a tenant-aware key.

- Computed read models. If a request needs to aggregate across five tables to render a dashboard widget, cache the resulting structure for 30 seconds. Add explicit invalidation (evict the entry when the source data changes) only when the consistency requirement actually demands it; for analytics-style reads, a TTL is almost always enough.

- Expensive third-party calls. External APIs you do not control are the worst place to be on a hot path. Cache aggressively, with circuit breakers around the cache misses.
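As an illustration of the computed read model case, here is a sketch built on Symfony's cache contracts. The DashboardQuery interface, the class name, and the key and tag naming are hypothetical; the 30-second TTL comes from the text above, and tag-based invalidation is included only for the cases where a TTL alone is not enough.

```php
<?php

declare(strict_types=1);

namespace App\ReadModel;

use Symfony\Contracts\Cache\ItemInterface;
use Symfony\Contracts\Cache\TagAwareCacheInterface;

/** Hypothetical port for the expensive multi-table aggregation. */
interface DashboardQuery
{
    /** @return array<string, mixed> */
    public function aggregateForTenant(int $tenantId): array;
}

final class CachedDashboard
{
    public function __construct(
        private readonly TagAwareCacheInterface $cache,
        private readonly DashboardQuery $query,
    ) {
    }

    /** @return array<string, mixed> */
    public function widget(int $tenantId): array
    {
        // Tenant-aware key, so one tenant never sees another tenant's numbers.
        return $this->cache->get(
            'dashboard.widget.' . $tenantId,
            function (ItemInterface $item) use ($tenantId): array {
                $item->expiresAfter(30);           // the TTL is the default freshness rule
                $item->tag('tenant.' . $tenantId); // allows targeted invalidation

                // Only runs on a cache miss.
                return $this->query->aggregateForTenant($tenantId);
            }
        );
    }

    /** Explicit invalidation, for when the consistency requirement demands it. */
    public function invalidate(int $tenantId): void
    {
        $this->cache->invalidateTags(['tenant.' . $tenantId]);
    }
}
```

The point of the tag is that clearing one tenant's dashboard never requires flushing anything else.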

The wrong things to cache, in order of how much they will burn you:

- Doctrine query results without a strict invalidation strategy. Doctrine’s second-level cache works in theory, hurts in practice on most applications. The invalidation rules are subtle and the failure mode is “users see stale data they cannot explain.”

- Cache wrappers on top of cache wrappers. APCu in front of Redis in front of Doctrine. Each layer adds a place for the cache to be wrong. Pick one layer per concern.

- Anything you cannot invalidate explicitly when needed. If the only way to clear the cache is to flush all of Redis, you have built a debugging problem, not a performance solution.

4. Move long-running work to a queue, with the right transport

This is the move teams under-invest in by reflex. They keep saying “we will move it to a queue when it becomes a problem,” and then it becomes a problem on a Friday afternoon during a launch.

The work that needs to be queued is anything that:

- Calls an external API on the request path

- Sends an email

- Generates a PDF or processes an image

- Updates more than a few rows in a transaction

- Could be retried safely if it failed

In Symfony 7.4, Messenger gets you most of the way there. The mistake teams make is reaching for sync:// for too long because “we are a small team.” The shape of your code should be message-based even when the transport is sync, because converting later is mechanical, but converting code that relies on synchronous calls into async messages is not.
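A minimal sketch of that message-based shape. The message, the handler, and the PasswordResetMailer interface are hypothetical names; the only Symfony-specific piece is the AsMessageHandler attribute, which registers the handler with Messenger.

```php
<?php

declare(strict_types=1);

namespace App\Messenger\Async\High;

use Symfony\Component\Messenger\Attribute\AsMessageHandler;

/** Hypothetical port; in a real application this would wrap the mailer. */
interface PasswordResetMailer
{
    public function send(int $userId, string $token): void;
}

/** The message carries only scalar data, so it serializes cleanly on any transport. */
final class SendPasswordResetEmail
{
    public function __construct(
        public readonly int $userId,
        public readonly string $token,
    ) {
    }
}

#[AsMessageHandler]
final class SendPasswordResetEmailHandler
{
    public function __construct(private readonly PasswordResetMailer $mailer)
    {
    }

    // Runs inline on the sync transport and on a worker on an async one;
    // the handler code is identical in both cases.
    public function __invoke(SendPasswordResetEmail $message): void
    {
        $this->mailer->send($message->userId, $message->token);
    }
}
```

The calling code dispatches the message through the message bus and never calls the mailer directly. Moving the work off the request path later is then a routing change, not a code change.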

A reasonable starting transport configuration:

```yaml
framework:
    messenger:
        transports:
            async_low:
                dsn: '%env(MESSENGER_TRANSPORT_DSN)%'
                options:
                    queue_name: low
                retry_strategy:
                    max_retries: 5
                    multiplier: 3
            async_high:
                dsn: '%env(MESSENGER_TRANSPORT_DSN)%'
                options:
                    queue_name: high
                retry_strategy:
                    max_retries: 3
                    multiplier: 2
            failed:
                dsn: 'doctrine://default?queue_name=failed'

        failure_transport: failed

        routing:
            # The trailing * matches every message class in the namespace.
            'App\Messenger\Async\Low\*': async_low
            'App\Messenger\Async\High\*': async_high
```

The two-queue split (low and high) is the part most teams skip. It matters because the moment you have a single queue, a flood of low-priority messages (welcome emails, analytics events) blocks the high-priority ones (password resets, payment confirmations). Splitting them costs nothing and saves you the worst class of incident.
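The retry_strategy values in that configuration are easier to reason about as a schedule. The sketch below assumes Messenger's multiplier strategy with its default base delay of 1000 ms (delay times multiplier to the power of the retry count) and ignores any jitter or max_delay cap; the function name is hypothetical.

```php
<?php

declare(strict_types=1);

/**
 * Delay before each retry, in milliseconds, for an exponential multiplier strategy.
 *
 * @return list<int>
 */
function retryDelaysMs(int $maxRetries, int $multiplier, int $baseDelayMs = 1000): array
{
    $delays = [];
    for ($retry = 0; $retry < $maxRetries; $retry++) {
        $delays[] = $baseDelayMs * $multiplier ** $retry;
    }

    return $delays;
}

$schedules = [
    'async_low' => retryDelaysMs(5, 3),
    'async_high' => retryDelaysMs(3, 2),
];

foreach ($schedules as $transport => $delays) {
    printf(
        "%-10s retries after %s ms, %d ms of waiting before the failed queue\n",
        $transport,
        implode(', ', $delays),
        array_sum($delays)
    );
}
```

Under these assumptions a low-priority message waits about two minutes in total before landing in the failed transport, and a high-priority message about seven seconds, so a failing password reset surfaces quickly.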

Pick a real broker (RabbitMQ, Amazon SQS, Redis Streams) before you have to. The Doctrine transport is fine for development and the failed queue, but it does not scale to the volumes a busy production application generates.

5. Stop deploying like it is 2016

If your deployment is “SSH in, git pull, composer install --no-dev, bin/console cache:clear, restart PHP-FPM,” you have a scaling ceiling that has nothing to do with traffic.

The minimum acceptable deployment for a production PHP application:

- Build a deployable artifact in CI (a tarball or a container image) with all dependencies installed and the cache pre-warmed.

- Deploy by switching a symlink (with deployer/deployer) or by rolling a container image (with Kubernetes or ECS), not by mutating the running directory.

- Run database migrations as a separate, explicit step, not as part of the application boot.

- Roll the deployment across instances, not all at once. Even if you have two instances, deploy them sequentially with a health check in between.

This matters at scale because the next problem you will hit, after fixing the database and the queues, is that you cannot deploy frequently enough to keep up with the changes the team needs to ship. A deployment that takes 19 minutes and might fail will get done once a day, on a good day. A deployment that takes 90 seconds and is reversible by re-deploying the previous build will get done six times a day, which means each deployment carries less change, which means less risk per deployment, which means more deployments. The whole loop tightens.

6. Make session storage someone else’s problem

Symfony’s default file-based session storage works beautifully on a single server and falls apart the moment you add a second one. Sticky sessions on the load balancer are the wrong fix; they just make your scaling problem invisible until you need to take an instance out for maintenance and discover that 30% of your active users are pinned to it.

Move sessions to Redis. Use the RedisSessionHandler and configure it explicitly:

```yaml
framework:
    session:
        handler_id: 'redis_session_handler'
        cookie_secure: auto
        cookie_samesite: lax
        gc_maxlifetime: 1209600 # 14 days

services:
    redis_session_handler:
        class: Symfony\Component\HttpFoundation\Session\Storage\Handler\RedisSessionHandler
        arguments:
            - '@Redis'
            - { prefix: 'app:session:', ttl: 1209600 }

    Redis:
        class: Redis
        calls:
            - connect: ['%env(REDIS_HOST)%', '%env(int:REDIS_PORT)%']
```

Two notes that catch teams:

- The session is a hot key. Every authenticated request reads and writes it. Make sure your Redis is sized for it, and consider a separate Redis instance for sessions versus cache so that flushing one does not kill the other.

- Unlike the native PHP file handler, RedisSessionHandler does not perform session locking, which means concurrent writes can race (the classic symptom is a spurious “Invalid CSRF token” when two AJAX requests fire at once). Either keep session writes idempotent on your hot paths, or wrap the critical sections in a Redis lock. If you need native locking semantics on a relational store, PdoSessionHandler supports LOCK_TRANSACTIONAL at the row level.

7. Add observability before you need it

When the production problem hits, you will have no time to instrument. You will have time to read graphs that already exist, and time to grep logs you already wrote, and that is it.

The four things every production PHP application needs before it crosses a million requests a day:

- Structured logging. Every log line is JSON, with at minimum: timestamp, level, channel, message, context object, request_id, user_id (or null). Monolog with the JSON formatter, plus a request listener that injects the request_id into the context, gets you there in under an hour.

- Distributed tracing. OpenTelemetry instrumentation across the application boundaries (HTTP in, HTTP out, database, queue). The point is not pretty waterfalls; the point is being able to answer “why was this specific request slow?” without guessing.

- An error tracker. Sentry, Bugsnag, or equivalent. Not a log file. The signal-to-noise ratio of error logs is too low to be useful in incident response.

- A small set of business metrics. Not infrastructure metrics. Metrics like “orders placed per minute,” “checkouts started per minute,” “payment failures per minute.” These are the metrics that tell you whether the customer is having a problem, which is the only definition of “the system is broken” that matters.

The error tracker and the business metrics are the two most often skipped, and they are the two that pay back fastest. Get them in week one.
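For the structured logging item, the target shape of a single log line looks roughly like the sketch below. It is plain PHP for illustration only: Monolog's JSON formatter produces its own layout, and the function name and sample values here are hypothetical. What matters is that every line carries the same fields and that one request_id is shared by every line a request emits.

```php
<?php

declare(strict_types=1);

/**
 * Build one structured log line with the minimum fields for incident response.
 *
 * @param array<string, mixed> $context
 */
function formatLogLine(
    string $level,
    string $channel,
    string $message,
    array $context,
    string $requestId,
    ?int $userId,
    ?DateTimeImmutable $now = null,
): string {
    return json_encode([
        'timestamp' => ($now ?? new DateTimeImmutable())->format(DateTimeInterface::RFC3339_EXTENDED),
        'level' => $level,
        'channel' => $channel,
        'message' => $message,
        'context' => (object) $context, // always a JSON object, even when empty
        'request_id' => $requestId,
        'user_id' => $userId,           // null for anonymous requests
    ], JSON_THROW_ON_ERROR | JSON_UNESCAPED_SLASHES);
}

// One ID per request, reused by every log line that request emits.
$requestId = bin2hex(random_bytes(8));

echo formatLogLine('error', 'payment', 'Charge failed', ['order_id' => 1234], $requestId, 42), PHP_EOL;
echo formatLogLine('info', 'app', 'Health check', [], $requestId, null), PHP_EOL;
```

With that shape in place, "show me everything that happened in request X" becomes a single filter instead of a grep across files.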

8. Take security seriously enough to write the policy down

Scaling exposes a security surface that the small-application phase hid. More users, more data, more attack value. Three things that need to exist before you have an incident, not after:

A dependency upgrade cadence. composer outdated --direct --strict runs in CI weekly, with someone owning the upgrades. Jumping a Symfony LTS line is a project, not an afterthought. PHP version updates are calendared. The reason this matters at scale is that vulnerabilities get found and disclosed, and the gap between disclosure and exploitation has shrunk to days. A team that upgrades quarterly can sit on a disclosed vulnerability for up to three months. A team that upgrades monthly caps that window at about four weeks.

A secrets policy. Secrets live in a secret manager (AWS Secrets Manager, HashiCorp Vault, Doppler), not in .env files committed anywhere. Symfony’s secrets:set is fine for small teams. The rule is that no secret is in a git history, ever; if one slips, rotate it within 24 hours.

Rate limiting on the auth endpoints. Symfony’s RateLimiter component (and the built-in login_throttling firewall listener for login specifically), applied to /login, /register, and /password-reset, with a token-bucket policy. This is one hour of work that prevents the largest class of credential-stuffing incidents that hit applications when they reach a size that makes them worth attacking.
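For readers who have not met the token-bucket policy before, here is a self-contained PHP sketch of the idea. It is not Symfony's implementation, and in a real application you would configure the RateLimiter component rather than write this yourself; the class name and the numbers (5 attempts, refilled at 5 per 15 minutes) are hypothetical.

```php
<?php

declare(strict_types=1);

/**
 * Illustrative token bucket: a burst of up to $capacity attempts,
 * then a steady refill of $refillAmount tokens per $refillIntervalSeconds.
 */
final class TokenBucket
{
    private float $tokens;
    private int $lastRefillAt;

    public function __construct(
        private readonly int $capacity,
        private readonly int $refillAmount,
        private readonly int $refillIntervalSeconds,
        int $now,
    ) {
        $this->tokens = (float) $capacity; // the bucket starts full
        $this->lastRefillAt = $now;
    }

    /** Returns true if the attempt is allowed at Unix time $now. */
    public function consume(int $now): bool
    {
        // Credit tokens for the time elapsed since the last call, capped at capacity.
        $elapsed = max(0, $now - $this->lastRefillAt);
        $this->tokens = min(
            (float) $this->capacity,
            $this->tokens + $elapsed * $this->refillAmount / $this->refillIntervalSeconds
        );
        $this->lastRefillAt = max($this->lastRefillAt, $now);

        if ($this->tokens < 1.0) {
            return false;
        }

        $this->tokens -= 1.0;

        return true;
    }
}

// One bucket per client key (for example IP plus username) on /login.
$bucket = new TokenBucket(capacity: 5, refillAmount: 5, refillIntervalSeconds: 900, now: 0);

$results = [];
for ($attempt = 1; $attempt <= 6; $attempt++) {
    $results[] = $bucket->consume(0); // six attempts in the same second
}
$afterThreeMinutes = $bucket->consume(180); // one token has been refilled by now

var_dump($results, $afterThreeMinutes);
```

The burst of five goes through, the sixth attempt is refused, and a single attempt is allowed again three minutes later. That is the behavior you want against credential stuffing: a real user who mistypes a password is not locked out, while an automated run slows to a crawl.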

9. Know what each request costs you

This is the item most teams never get to, and it is the one that decides whether your scaling story is sustainable or whether you wake up one morning to a $40,000 cloud bill.

The minimum: you should be able to answer, in under five minutes, the question “what does it cost us to serve a thousand requests to our most expensive endpoint?”

The moves that get you there:

- Tag every cloud resource with a service and environment tag. Enforce it in Terraform or whatever provisioning tool you use.

- Set up a weekly cost report broken down by service. AWS Cost Explorer, GCP Billing Reports, or just a CSV pull into a spreadsheet for a small environment.

- For the top three most expensive services, build a per-request cost estimate. RDS hours per ten thousand queries, S3 storage and request costs per ten thousand uploads, third-party API costs per call. These do not need to be exact. They need to exist as a number.

The reason this matters: scaling is also a pricing decision. If your most expensive endpoint costs 4 cents per call and your customer pays $9.99/month for unlimited calls, your unit economics will eventually catch up to you. Knowing the number means you can either price for it, optimize for it, or rate-limit for it before the bill arrives.
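The arithmetic is simple enough to keep in a script next to the cost report. This sketch works in integer cents to avoid floating-point noise. The 4 cents per call and the $9.99 plan come from the example above; the function names and the monthly bill and request volume in the first calculation are hypothetical.

```php
<?php

declare(strict_types=1);

/** Cost of serving 1,000 requests, in cents, from a monthly bill and a monthly request count. */
function costPerThousandCents(int $monthlyCostCents, int $monthlyRequests): float
{
    return $monthlyCostCents * 1000 / $monthlyRequests;
}

/** Number of calls a subscription price fully covers at a given per-call cost. */
function breakEvenCalls(int $priceCents, int $costPerCallCents): int
{
    return intdiv($priceCents, $costPerCallCents);
}

// Hypothetical: a service that costs $1,200 a month and serves 3,000,000 requests.
printf("%.1f cents per 1,000 requests\n", costPerThousandCents(120000, 3000000));

// From the text: a 4-cent endpoint on a $9.99/month unlimited plan.
printf("plan covers %d calls per month\n", breakEvenCalls(999, 4));
```

On those numbers the plan covers 249 calls a month, so every customer who makes a 250th call to that endpoint is served at a loss.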

What gets fixed first

If you have a typical PHP application that has reached a few thousand requests a day and is feeling the strain, the order of operations is approximately:

- Week 1: Database analysis, slow query review, top-twenty audit. Add indexes for the obvious wins.

- Week 2: Endpoint instrumentation. Get p50/p95/p99 visible. Pick the top three slow endpoints and dig.

- Week 3: Sessions to Redis if not already done. Move the worst synchronous external API calls to async messages.

- Week 4: Observability stack. Sentry + structured logs + a basic business metric dashboard.

- Month 2: Real deployment pipeline if not already done. HTTP cache on the cacheable routes.

- Month 3: Two-queue Messenger split. Cost tagging and weekly cost report.

You will notice that “rewrite to microservices” is not on this list. That is intentional. Microservices solve organizational problems, not scaling problems, and a team that has not done items 1-4 will not get scaling relief by adding network calls between modules. Do the foundational work first. Then, if you still need to split, do it from a position of operational competence.

When this checklist does not apply

This list is for applications that have outgrown their first architecture but are still small enough that one team can hold the whole picture. Specifically:

- Under 1,000 requests a day: you do not have a scaling problem yet, you have a future scaling problem. Do item 1 (database boring), skip the rest until growth is visible.

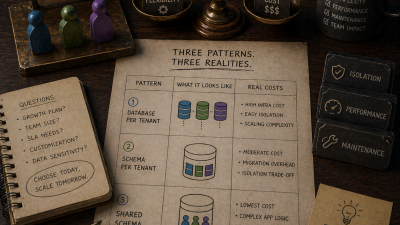

- Multi-tenant SaaS at the enterprise scale: the tenant isolation work dominates everything else, and the queue split needs to be tenant-aware. Different essay, different checklist.

- Heavy real-time needs (websockets, streaming): PHP is not the wrong choice, but the operational profile is different. Mercure or similar carries different concerns than the request-response model this list assumes.

For everything in between, this is the list.

If your PHP application is starting to feel the strain and you want help working through this checklist with a team that has done it before, my scaling engagement is built around exactly this sequence. A four-week diagnostic and remediation sprint, then a sustained partnership through the items that take longer than a quarter to land.

References

- pg_stat_statements (PostgreSQL docs): the extension for tracking planning and execution statistics for every SQL statement, which is the backbone of the top-twenty review.

- Symfony HTTP Cache: reference for Symfony’s reverse-proxy cache, ESI, and the Cache-Control semantics used on cacheable routes.

- Symfony Messenger: the message bus used for async work, including transport configuration and routing.

- Symfony Sessions: handler options including RedisSessionHandler (no locking) and PdoSessionHandler (configurable LOCK_TRANSACTIONAL).

- Symfony RateLimiter: policies (fixed window, sliding window, token bucket) and how to apply them to specific endpoints.

- Symfony Security, login throttling: the built-in firewall-level throttling designed for /login specifically.

- OpenTelemetry for PHP: the stable tracing/metrics/logs SDK for distributed tracing across HTTP, database, and queue boundaries.